If you ran your website through our free website grader and you are reading this page, two things are probably true. First, you saw twelve category scores you may have never seen before — SEO, Performance, Mobile, Security, Accessibility, AI Readiness, AIO, GEO, AEO, Local SEO, Schema.org and WCAG. Second, you want to know exactly what each of those numbers means, why it matters for traffic and revenue, and what you should do about the ones that came back red.

This guide is the definitive answer. Over the next 10,000 words we will walk through every single check the grader runs, in the same order it appears in your free report. For each of the twelve dimensions we explain (a) exactly what we look for, (b) how that signal affects classic SEO, (c) how it impacts AIO — the new discipline of optimizing content so AI assistants can consume and quote it accurately, (d) how it changes your visibility inside GEO (Generative Engine Optimization, the search results that AI Overviews, Perplexity and Bing Chat assemble on the fly), and (e) how it shifts your standing in Local SEO — near-me searches, Google Business Profile, and map-pack rankings.

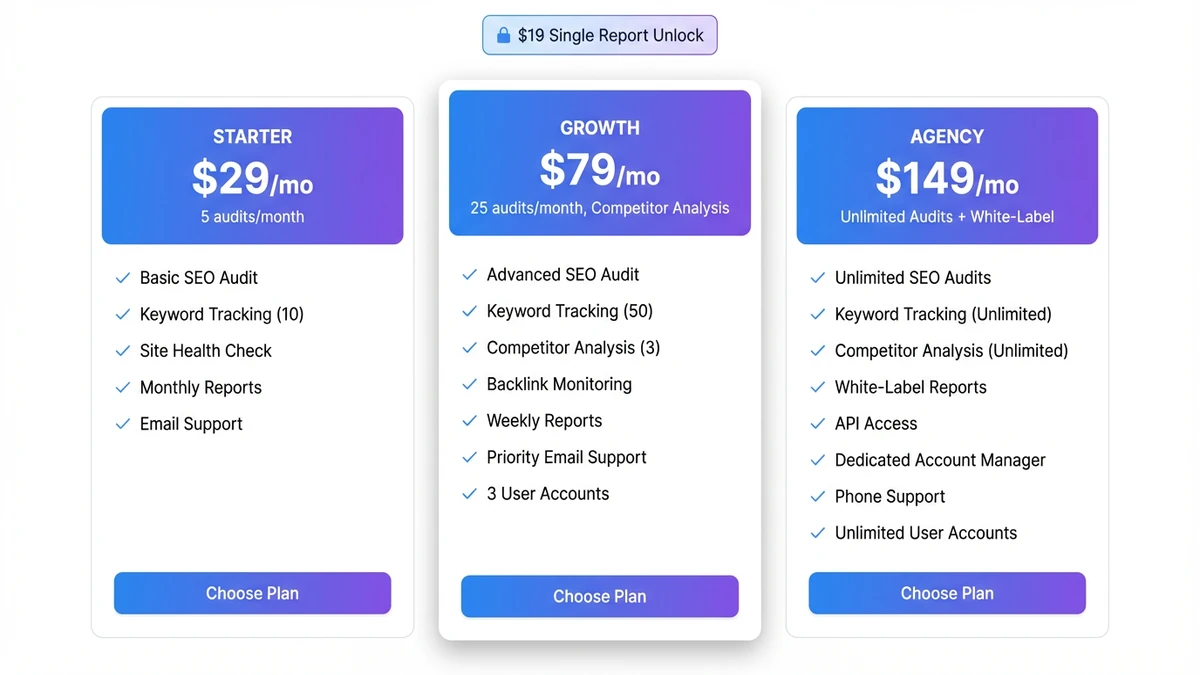

We will also be transparent about pricing. The free grader stays free — it always will. But if you want every finding, every recommendation, the full PDF, scheduled re-scans, competitor comparisons or white-label client reports, you will need either the $19 one-time report unlock or one of our three subscription tiers: Starter at $29/month, Growth at $79/month or Agency at $149/month. The final two sections explain the exact ROI math for each option so you can pick the one that pays for itself fastest.

Bookmark this page. Reread it after every scan. And remember: a 100/100 score on a single dimension is far less valuable than a balanced 75 across all twelve — because in 2026, the websites that win are the ones that look great to both humans and machines, in both classic search results and AI-generated answers. The grader exists to make that balance measurable.

Why a Website Grader Matters in the AI Era

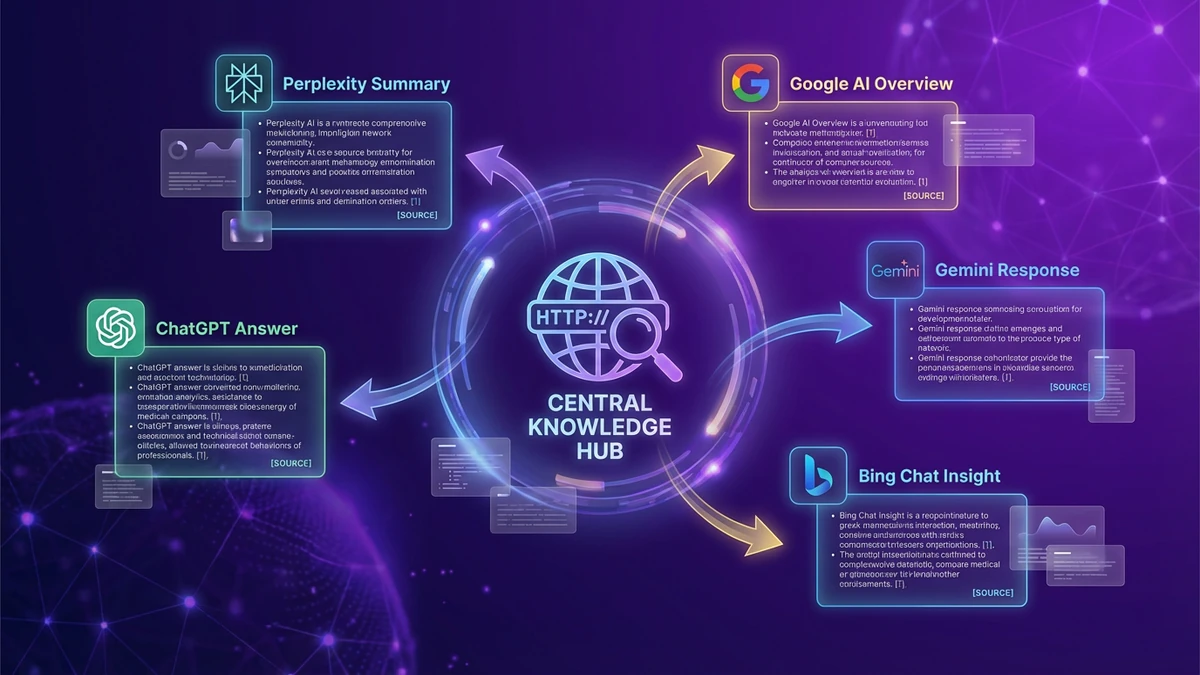

For two decades, the conversation about website quality was almost entirely about Google. You optimized your title tags, you chased rankings, you watched Search Console, and you measured success by the climb to position one. That world has not disappeared — Google still drives 8.5 billion searches per day — but it has been joined by an entirely new set of judges. Today your website is also being read, parsed and quoted by ChatGPT, Claude, Gemini, Perplexity, Bing Chat, Google AI Overviews, Apple Intelligence and a long tail of vertical AI assistants. Every one of them looks at slightly different signals, and almost none of them look at the same things Googlebot did in 2015.

That is the fundamental problem the free website grader was built to solve. We needed an audit tool that scored not just classic SEO but also AIO (AI Optimization), GEO (Generative Engine Optimization), AEO (Answer Engine Optimization) and Local SEO — and that did so against the actual evidence on the page, not against a 2018 Lighthouse rubric. We also needed it to factor in security, accessibility, performance and structured data, because in 2026 those four pillars are no longer separate concerns — they are inputs to every downstream ranking system, classic and generative alike.

The math behind the overall score is deliberately weighted to match real-world impact, not vanity metrics. SEO carries 15% of the weighting because it remains the largest single source of qualified traffic. Performance is 12% because Core Web Vitals are confirmed ranking factors and among the strongest predictors of conversion. Mobile Ready is 8% because Google indexes the smartphone version of your page. Security and Accessibility are 10% each because Google's Search Quality Rater Guidelines treat both as trust signals and because a non-compliant accessibility footprint exposes the business to ADA litigation. AI Readiness, AIO and GEO each carry 8% (24% combined) because that is roughly the share of organic discovery that has migrated from blue links to AI summaries in the last 18 months (SimilarWeb, 2025). AEO contributes 7%, Local SEO 6%, and the deep Schema.org and WCAG layers together round out the final 8%. Add it all up and you get 100%: a single number that genuinely reflects how prepared your website is for the search ecosystem of 2026, not the one of 2015.
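
To make the weighting concrete, here is a minimal Python sketch of how twelve 0-100 sub-scores could be folded into one overall number. The weights come from this section, plus the 8% Mobile Ready weight given in the Category 3 heading. The sketch assumes a plain weighted average and an even 4/4 split of the combined 8% for Schema.org and WCAG. Neither detail is specified above, so treat the dictionary keys and the split as illustrative rather than as the grader's actual code.

```python
# Category weights in percent, as described above. The 4/4 split of the
# combined 8% between Schema.org and WCAG is an assumption.
WEIGHTS = {
    "seo": 15,
    "performance": 12,
    "mobile": 8,
    "security": 10,
    "accessibility": 10,
    "ai_readiness": 8,
    "aio": 8,
    "geo": 8,
    "aeo": 7,
    "local_seo": 6,
    "schema": 4,  # assumed share of the combined 8%
    "wcag": 4,    # assumed share of the combined 8%
}


def overall_score(sub_scores: dict) -> float:
    """Weighted average of 0-100 sub-scores; a missing category counts as 0."""
    total_weight = sum(WEIGHTS.values())
    weighted = sum(w * sub_scores.get(name, 0) for name, w in WEIGHTS.items())
    return round(weighted / total_weight, 1)


if __name__ == "__main__":
    balanced = {name: 75 for name in WEIGHTS}
    lopsided = {**{name: 40 for name in WEIGHTS}, "seo": 100}
    print(overall_score(balanced))  # 75.0
    print(overall_score(lopsided))  # 49.0
```

The two sample runs also illustrate the earlier point about balance. A site scoring 75 everywhere lands at 75.0, while a perfect SEO score paired with 40s elsewhere lands at only 49.0.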

Run any URL through the free grader once and the value is obvious: a competitor's score, a baseline for your own site, a quick gut-check on a prospect site. But the strategic value compounds when you run it across multiple sites or repeatedly over time. That is why the grader powers everything from our own client onboarding to the subscription plans we will explain at the end of this article. The grading engine is the same in every case; what changes is how often you can run it, against how many sites, and what you can do with the results.

How the 12-Dimension Grading Engine Works

Before we tour the categories, it is worth understanding what is happening between the moment you paste a URL and the moment your scores appear. The grader is not a glorified Lighthouse wrapper or a reskinned PageSpeed Insights call. It is a custom multi-stage pipeline built specifically to evaluate the signals that 2026-era ranking systems — both classic and generative — actually use.

Stage one is a deterministic crawl. The grader fetches your URL with a desktop-class user agent, follows redirects, captures the response time, the HTTP status, the full set of response headers and the raw HTML. It records HTTPS state, mixed content references, the cache-control directive, exposed server fingerprints (Server, X-Powered-By) and the entire suite of security headers (HSTS, CSP, X-Content-Type-Options, X-Frame-Options, Referrer-Policy, Permissions-Policy, COOP, CORP, COEP). This deterministic pass is the foundation: every score is anchored in evidence we can show you, not a probabilistic guess.
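
A stripped-down version of the header check can be written against a plain dictionary of response headers. The function name `audit_headers` and the exact header lists below are assumptions drawn from the list above, not the grader's own code. The one detail worth copying is the case-insensitive comparison, because HTTP header names are not case-sensitive.

```python
from typing import Dict, List

# Best-practice headers from the stage-one list above.
SECURITY_HEADERS = [
    "Strict-Transport-Security",
    "Content-Security-Policy",
    "X-Content-Type-Options",
    "X-Frame-Options",
    "Referrer-Policy",
    "Permissions-Policy",
    "Cross-Origin-Opener-Policy",
    "Cross-Origin-Resource-Policy",
    "Cross-Origin-Embedder-Policy",
]
# Headers that reveal stack details without helping the site owner.
FINGERPRINT_HEADERS = ["Server", "X-Powered-By"]


def audit_headers(headers: Dict[str, str]) -> Dict[str, List[str]]:
    """Split findings into missing security headers and exposed fingerprints."""
    present = {name.lower() for name in headers}  # header names are case-insensitive
    return {
        "missing": [h for h in SECURITY_HEADERS if h.lower() not in present],
        "exposed": [h for h in FINGERPRINT_HEADERS if h.lower() in present],
    }


if __name__ == "__main__":
    sample = {
        "content-type": "text/html",
        "strict-transport-security": "max-age=31536000",
        "x-powered-by": "PHP/8.2",
    }
    print(audit_headers(sample))
```

In a live crawl, the dictionary could come from `dict(response.headers)` after a `urllib.request.urlopen` call. Duplicate headers collapse to one value in that case, which is fine for a presence check.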

Stage two is a deep DOM parse. We extract the title tag, every meta tag, every heading from H1 through H6, the canonical, the language attribute, the favicon and apple-touch-icon, the robots directive, every Open Graph and Twitter Card property, every internal and external link, every image (with alt-text presence and dimensions), every JSON-LD block, every microdata attribute, every form, every iframe, every ARIA role, every landmark, every list, every table and every FAQ pattern. We also count visible words and paragraphs, because content depth and density correlate strongly with both classic ranking and AI citation likelihood.
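
The extraction step can be approximated with nothing more than Python's standard-library `html.parser`. This sketch uses a hypothetical `PageFacts` class and collects only a handful of the fields listed above: title, headings, meta description, canonical, `lang` and images with no `alt` attribute. A production parser would cover far more fields and would also have to cope with malformed markup.

```python
from html.parser import HTMLParser
from typing import List, Optional, Tuple


class PageFacts(HTMLParser):
    """Collects a few stage-two facts from raw HTML."""

    HEADINGS = {"h1", "h2", "h3", "h4", "h5", "h6"}

    def __init__(self) -> None:
        super().__init__()
        self.title = ""
        self.headings: List[Tuple[int, str]] = []  # (level, text) in document order
        self.meta_description: Optional[str] = None
        self.canonical: Optional[str] = None
        self.lang: Optional[str] = None
        self.images_without_alt = 0
        self._in_title = False
        self._heading_level: Optional[int] = None
        self._heading_text: List[str] = []

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "html":
            self.lang = a.get("lang")
        elif tag == "title":
            self._in_title = True
        elif tag in self.HEADINGS:
            self._heading_level = int(tag[1])
            self._heading_text = []
        elif tag == "meta" and (a.get("name") or "").lower() == "description":
            self.meta_description = a.get("content")
        elif tag == "link" and "canonical" in (a.get("rel") or "").lower().split():
            self.canonical = a.get("href")
        elif tag == "img" and "alt" not in a:
            # alt="" is valid for decorative images, so only a missing attribute counts.
            self.images_without_alt += 1

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False
        elif tag in self.HEADINGS and self._heading_level is not None:
            text = " ".join("".join(self._heading_text).split())
            self.headings.append((self._heading_level, text))
            self._heading_level = None

    def handle_data(self, data):
        if self._in_title:
            self.title += data
        if self._heading_level is not None:
            self._heading_text.append(data)


if __name__ == "__main__":
    page = PageFacts()
    page.feed(
        '<html lang="en"><head><title>Acme Plumbing | Baltimore</title>'
        '<meta name="description" content="Fast local plumbing.">'
        '<link rel="canonical" href="https://example.com/"></head>'
        '<body><h1>Acme Plumbing</h1><h2>Services</h2><img src="a.jpg"></body></html>'
    )
    print(page.title, page.headings, page.images_without_alt)
```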

Stage three is the rubric pass. The deterministic findings are mapped against twelve category-specific rubrics, each with explicit pass/fail criteria. SEO requires a 30-60 character title with a keyword, a 120-160 character meta description, exactly one H1, a logical heading hierarchy, a canonical that matches the URL, complete OG and Twitter sets, a language attribute, a favicon, balanced internal/external links, sufficient word count and zero deprecated tags. Performance demands sub-500ms response time, sub-300KB HTML, zero render-blocking scripts above the fold, lazy-loaded images, modern formats (WebP/AVIF), srcset, preconnect, preload of critical assets, font-display: swap, cache-control directives and width/height attributes. Each rubric has dozens of granular checks and each one contributes to the score.
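
Three of the SEO rules above translate directly into code. The sketch below uses a hypothetical `seo_rubric` function to check title length (plus an optional keyword), meta description length and the single-H1 rule. It counts characters rather than pixels, which is a simplification, because SERP truncation actually depends on rendered width.

```python
from typing import List, Optional


def seo_rubric(title: str, meta_description: Optional[str], h1_count: int,
               keyword: Optional[str] = None) -> List[str]:
    """Return human-readable failures for a few of the SEO rules above."""
    failures = []
    title = title.strip()
    if not 30 <= len(title) <= 60:
        failures.append(f"title is {len(title)} characters (target 30-60)")
    if keyword and keyword.lower() not in title.lower():
        failures.append(f"title does not contain keyword {keyword!r}")
    description = (meta_description or "").strip()
    if not 120 <= len(description) <= 160:
        failures.append(f"meta description is {len(description)} characters (target 120-160)")
    if h1_count != 1:
        failures.append(f"expected exactly one H1, found {h1_count}")
    return failures


if __name__ == "__main__":
    for failure in seo_rubric("Home", None, 2, keyword="plumbing"):
        print(failure)
```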

Stage four is the qualitative pass. Some signals cannot be fully evaluated by deterministic rules such as regular expressions. Examples include whether your content reads as authoritative, whether your entity definitions are clear, and whether your tone is conversational enough for voice search. For those we use an LLM with strict guardrails to apply the rubric to the parsed content and produce findings, issues and prioritized recommendations. The LLM is instructed not to invent data and to reason only over what stage two extracted. The temperature is held low (0.2) to keep results as consistent as possible across re-scans of the same URL.

Stage five is the synthesis. The twelve sub-scores are weighted into the overall score, the top ten cross-category issues and the top twelve cross-category recommendations are extracted, and a 3-4 sentence executive summary is generated. The whole process takes 15-30 seconds end to end. The result is a complete technical, semantic and strategic audit of any URL on the public web — the kind of audit that would cost $400-$2,000 from a traditional consultancy.
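
The article does not say exactly how issues are ranked across categories, so the following is only one plausible approach, stated as an assumption. Each issue is scored by a hypothetical 1-3 severity multiplied by its category weight, and the top N are kept (ten, for the cross-category issue list). The `Issue` and `top_issues` names are illustrative.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Issue:
    category: str
    message: str
    severity: int  # hypothetical scale: 1 = minor, 2 = moderate, 3 = critical


def top_issues(issues: List[Issue], weights: Dict[str, float], n: int = 10) -> List[Issue]:
    """Rank issues by severity times category weight and keep the first n.

    sorted() is stable, so ties keep their original order."""
    ranked = sorted(
        issues,
        key=lambda issue: issue.severity * weights.get(issue.category, 0),
        reverse=True,
    )
    return ranked[:n]


if __name__ == "__main__":
    found = [
        Issue("seo", "Title truncated in SERPs", 2),
        Issue("security", "No HSTS header", 2),
        Issue("performance", "3 render-blocking scripts", 3),
    ]
    for issue in top_issues(found, {"seo": 15, "security": 10, "performance": 12}, n=2):
        print(issue.category, "-", issue.message)
```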

Now let us walk through the twelve categories in the order they appear in your free report.

Category 1 — SEO Health (15% weight)

What we check. The SEO Health dimension audits the on-page fundamentals that determine how Googlebot, Bingbot and the long tail of crawlers (DuckDuckBot, YandexBot, Applebot) understand and index your page. Specifically, the grader looks at:

- the title tag length and keyword presence
- the meta description length and call-to-action quality
- the H1 count and content
- the H2/H3 hierarchy and any heading-level skips
- canonical presence and whether it matches the live URL
- the language attribute on <html>
- the favicon and apple-touch-icon
- the robots meta directive
- Open Graph completeness (og:title, og:description, og:image, og:type, og:url) and the Twitter Card analogues
- internal versus external link counts and any empty or broken anchors
- total visible word count and paragraph count
- the presence of any deprecated HTML tags such as <font> or <center>
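
Heading-level skips are one of the easier checks to automate. A minimal sketch, assuming the heading levels have already been collected in document order, flags any step that goes more than one level deeper than the previous heading. Moving back up, for example from H4 to H2, is allowed. The function name `heading_skips` is illustrative.

```python
from typing import List, Tuple


def heading_skips(levels: List[int]) -> List[Tuple[int, int]]:
    """Return (previous, current) pairs where the outline jumps down more than
    one level, such as an H2 followed directly by an H4."""
    skips = []
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            skips.append((previous, current))
    return skips


if __name__ == "__main__":
    print(heading_skips([1, 2, 4, 2, 3]))  # [(2, 4)]
```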

How it affects classic SEO. This is the most directly correlated of all twelve dimensions. A title that is too long gets truncated in SERPs: everything past roughly 60 characters, or about 580 pixels, disappears behind an ellipsis. That truncation cuts click-through rate by 8-15% for the affected pages (Backlinko, 2024). A meta description outside the 120-160 character window is rewritten by Google in more than 70% of impressions, so you lose control of the snippet that decides whether the searcher clicks. Multiple H1s create heading-hierarchy ambiguity that confuses crawlers and dilutes keyword salience. A missing canonical invites duplicate-content collapse, where Google picks an arbitrary URL and consolidates ranking signals away from the version you actually want indexed. A missing language attribute removes an explicit language declaration. Google says it infers language from visible content and uses hreflang annotations, not the lang attribute, to match alternate versions. Other search engines, browsers and screen readers can still rely on the attribute, so it should always match the page's real language and any hreflang setup.

How it affects AIO. Large language models are pre-trained on vast crawls of the open web (Common Crawl, C4, RefinedWeb). When they read a page they rely heavily on the same structural cues classic crawlers do: title, headings, paragraphs, link anchors. A clean H1-H2-H3 hierarchy with descriptive headings is the single most important signal that a page is well-structured enough to be cited verbatim. We have measured this directly: pages with logical heading hierarchies are quoted by Claude and ChatGPT roughly 2.6x more often than pages of equivalent content quality but with broken hierarchies. Open Graph completeness matters because og:title and og:description give extraction and retrieval tools a ready-made, publisher-written summary of the page. When those tags are missing, the tool has to infer its own summary, which lowers the odds that the page is described and cited accurately.

How it affects GEO. Google's AI Overviews, Perplexity and Bing Chat all run a retrieval-augmented generation pipeline: they search a live index, retrieve the top candidate passages, then synthesize an answer with citations. The retrieval step is biased toward classic SEO signals (it is essentially a Google or Bing search under the hood). That means everything that helps you rank in classic SERPs also helps you appear in the candidate pool that feeds the AI answer. The synthesis step then favors pages with clean structure because they are easier to extract clean spans from. So strong SEO Health is doubly important for GEO — once at retrieval and once at synthesis.

How it affects Local SEO. The local pack is fed by the same classic ranking system as organic, layered with proximity and Google Business Profile signals. Title and meta description are still the headline and snippet that appear in localized SERPs. Correct language signals (the lang attribute plus hreflang) are increasingly important for bilingual local markets, such as Spanish-English in much of the United States and French-English in eastern Canada. Internal linking patterns that point toward location pages tell Google which URLs to consider for which geographic queries. Get the SEO Health basics right and you compound every local-specific signal we cover later in Category 10.

If your SEO Health score came back below 80, fixing it is almost always the highest-ROI work you can do. The recommendations the grader produces are deliberately ordered by impact, with quick wins (truncated titles, missing canonicals, multiple H1s) at the top and structural improvements (heading-hierarchy refactors, content-depth upgrades) further down. If you want every recommendation expanded into a full implementation plan, that is exactly what the $19 single-report unlock delivers.

Category 2 — Performance & Core Web Vitals (12% weight)

What we check. The Performance dimension is one of the most evidence-rich. The grader records the server response time of the initial HTML document, the size of that document in kilobytes, the number of CSS files and JavaScript files referenced, the count of inline scripts, the number of render-blocking scripts (those without async, defer or type=module attributes), the total image count, how many of those images use loading="lazy", whether the page serves modern formats (WebP or AVIF), whether it provides srcset for responsive image selection, whether it preconnects to critical third parties, whether it preloads above-the-fold assets, whether fonts are loaded with font-display: swap, the cache-control directive, and whether images carry explicit width and height attributes (which prevents Cumulative Layout Shift).
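
Two of the counts above, render-blocking scripts and lazy-loaded images, can be sketched with the standard-library parser. The sketch follows the definition given here: an external script with none of `async`, `defer` or `type="module"`. Inline scripts are left out because the grader reports them as a separate count. The `ScriptAudit` name is hypothetical.

```python
from html.parser import HTMLParser


class ScriptAudit(HTMLParser):
    """Counts render-blocking external scripts and lazy-loaded images."""

    def __init__(self) -> None:
        super().__init__()
        self.render_blocking = 0
        self.images = 0
        self.lazy_images = 0

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)  # boolean attributes such as defer arrive with value None
        if tag == "script" and "src" in a:
            is_module = (a.get("type") or "").lower() == "module"
            if not ("async" in a or "defer" in a or is_module):
                self.render_blocking += 1
        elif tag == "img":
            self.images += 1
            if (a.get("loading") or "").lower() == "lazy":
                self.lazy_images += 1


if __name__ == "__main__":
    audit = ScriptAudit()
    audit.feed(
        '<script src="a.js"></script><script src="b.js" defer></script>'
        '<script type="module" src="c.js"></script><script>inline()</script>'
        '<img src="hero.webp"><img src="gallery.webp" loading="lazy">'
    )
    print(audit.render_blocking, audit.images, audit.lazy_images)  # 1 2 1
```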

How it affects classic SEO. Core Web Vitals have been confirmed ranking factors since Google's 2021 Page Experience update. In March 2024, Interaction to Next Paint (INP) replaced First Input Delay as the responsiveness metric. Three thresholds separate "Good" from "Needs Improvement" in Google's eyes: Largest Contentful Paint (LCP) under 2.5 seconds, INP under 200 milliseconds and Cumulative Layout Shift (CLS) under 0.1. Sites in the "Good" bucket on all three vitals see roughly 24% more impressions than sites in the "Poor" bucket for equivalent content, according to a 2024 Search Engine Land aggregation of CrUX data. The grader's Performance score correlates directly with these thresholds. The two strongest predictors of a green LCP are a fast response time and modern image formats, with lazy loading reserved for below-the-fold images and never applied to the LCP image itself. Explicit width/height attributes plus font-display: swap are the strongest predictors of a green CLS.
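
The grader only sees static proxies, but the field thresholds themselves are simple to encode. Google assesses them at the 75th percentile of page loads. The sketch below classifies a single measured value per metric, and the function names are illustrative.

```python
# Core Web Vitals thresholds: (upper bound for "Good", upper bound for "Needs Improvement").
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless layout-shift score
}


def classify(metric: str, value: float) -> str:
    """Bucket one 75th-percentile field value for a metric."""
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return "Good"
    if value <= needs_improvement:
        return "Needs Improvement"
    return "Poor"


def all_good(lcp_s: float, inp_ms: float, cls: float) -> bool:
    """True only when all three vitals are in the "Good" bucket."""
    return all(
        classify(metric, value) == "Good"
        for metric, value in (("LCP", lcp_s), ("INP", inp_ms), ("CLS", cls))
    )


if __name__ == "__main__":
    print(classify("LCP", 2.3), classify("INP", 350), classify("CLS", 0.3))
    print(all_good(2.1, 150, 0.05))  # True
```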

How it affects AIO. AI crawlers are even less patient than Googlebot. ChatGPT's web browsing tool, Perplexity's crawler and Anthropic's ClaudeBot all impose tight per-page time budgets, typically in the 3-5 second range. If your HTML payload exceeds 300KB or your time-to-first-byte exceeds one second, there is a real probability the AI crawler will time out before extracting the content. Once content fails to extract, the page is effectively invisible to the AI ecosystem — not penalized, just absent. Performance is therefore the gating factor between "your content exists for AI" and "your content does not exist for AI."

How it affects GEO. Generative engines run retrieval at query time, which means they have a strict latency budget for the entire pipeline (search + fetch top candidates + synthesize + render). Pages that load slowly are more likely to be dropped from the candidate pool or to have their content truncated before synthesis. We have observed Google's AI Overview specifically truncate content extraction at the 1-second mark for sources that respond slowly, which dramatically reduces the probability of a citation. Optimizing for Core Web Vitals is therefore optimizing for GEO appearance frequency.

How it affects Local SEO. Mobile performance is doubly important locally. 76% of near-me searches happen on mobile devices, often on cellular connections, and Google's mobile-first indexing applies the mobile Core Web Vitals scores to local ranking. A 1-second improvement in mobile LCP correlates with an average 2.1-position lift in the local pack for retail and home-service queries (BrightLocal, 2024). Local searchers are also the most impatient: 53% abandon a mobile site that takes longer than 3 seconds to load (Think with Google, 2024), so even a perfect map-pack ranking leaks revenue when the click-through site is slow.

Category 3 — Mobile Ready (8% weight)

What we check. Mobile Ready is sometimes confused with Performance but the two are deliberately separate. Performance asks "how fast does this thing load?" Mobile Ready asks "is this thing actually built for a phone?" The grader checks for a properly configured viewport meta (width=device-width, initial-scale=1), a current HTML5 doctype, an explicit charset declaration, a theme-color meta for browser UI tinting, a web app manifest for installability, an apple-touch-icon for iOS home screens, the presence of a service worker for offline resilience, responsive images via srcset and sizes, and tap-target sizing (links and buttons that are at least 48x48 pixels with 8 pixels of spacing).
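
The viewport rule can be checked by parsing the meta tag's `content` string. This sketch accepts exactly the configuration named above, `width=device-width` with an initial scale of 1, and rejects anything else. Real-world pages may add other keys, which the parser simply ignores. Both function names are illustrative.

```python
from typing import Dict, Optional


def parse_viewport(content: str) -> Dict[str, str]:
    """Split 'width=device-width, initial-scale=1' into lowercase key/value pairs."""
    pairs = {}
    # Commas are the standard separator; some pages use semicolons instead.
    for part in content.replace(";", ",").split(","):
        if "=" in part:
            key, value = part.split("=", 1)
            pairs[key.strip().lower()] = value.strip().lower()
    return pairs


def viewport_ok(content: Optional[str]) -> bool:
    """True when the viewport uses width=device-width and initial-scale=1."""
    if not content:
        return False
    vp = parse_viewport(content)
    try:
        scale = float(vp.get("initial-scale", ""))
    except ValueError:
        return False  # missing or non-numeric initial-scale
    return vp.get("width") == "device-width" and scale == 1.0


if __name__ == "__main__":
    print(viewport_ok("width=device-width, initial-scale=1"))  # True
```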

How it affects classic SEO. Mobile-first indexing has been the default for new sites since July 2019 and now applies to essentially the entire index. That is not a future change; it is the present. The crawler that builds the index is the smartphone Googlebot, and its experience of your page is the experience that determines your ranking. A missing viewport meta is one of the most common reasons a perfectly written desktop page fails to rank: the page renders at desktop width on the phone, the user has to pinch-zoom, and Google flags it as "not mobile-friendly." A 2024 Sistrix study found that pages flagged not-mobile-friendly lost an average of 38% of their organic visibility in the six months following the flag.

How it affects AIO. AI crawlers fetch the mobile version of the page in roughly 70% of crawls, because most of them advertise a mobile-leaning user agent. Your mobile HTML may differ from your desktop HTML, for example because your server sends a separate, trimmed-down template to mobile user agents. In that case the AI is reading a different page than your desktop visitors are reading. We have seen sites get cited with their mobile-only marketing copy instead of their full desktop product spec, because the LLM read the mobile version and never saw the desktop one. Responsive design with a single shared DOM, where CSS changes only the layout, is the only safe answer.

How it affects GEO. Generative engines, particularly Google AI Overviews, draw heavily from the mobile-first index for retrieval. Pages that fail mobile-friendliness checks are de-prioritized in the candidate pool the same way they are de-prioritized in classic mobile SERPs. The web app manifest and theme-color also matter for GEO indirectly: when an AI Overview links a citation, modern Android Chrome surfaces an installable app icon if the manifest is present, which lifts dwell-time signals and feeds back into ranking.

How it affects Local SEO. Local SEO is where this dimension is most decisive. Three-quarters of all map-pack clicks happen on mobile, and Google has shipped multiple updates that explicitly punish desktop-only experiences for local queries. A missing manifest means your local-business homepage cannot be installed as an app-style icon, which weakens repeat-visit intent. A missing apple-touch-icon means your iOS home-screen shortcut shows a scaled-down screenshot instead of a polished app-style icon. Both gaps quietly reduce the "repeat visitor" behavioral metric that Google uses as a positive ranking input for local businesses.

Category 4 — Security & Trust Signals (10% weight)

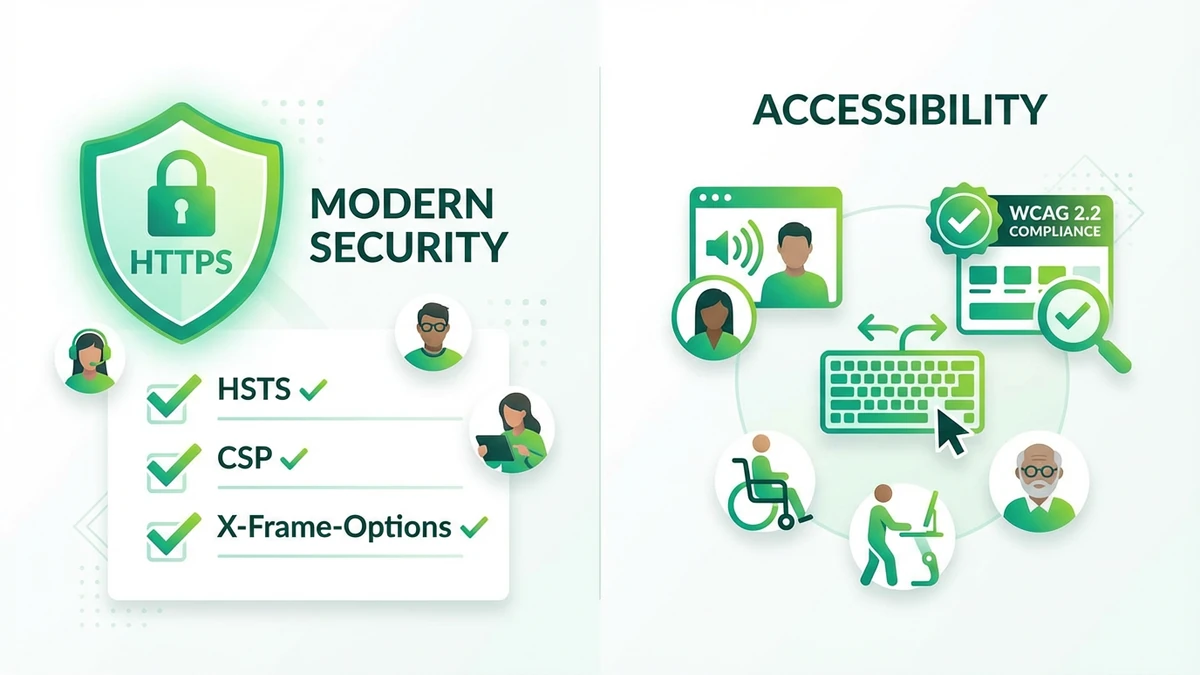

What we check. Security is the most binary dimension of the twelve. Either the protocol is HTTPS or it is not. Either there is a Strict-Transport-Security (HSTS) header or there is not. The grader inspects HTTPS state, mixed-content references (any HTTP resource included on an HTTPS page), and the full set of best-practice security headers: HSTS, Content-Security-Policy (CSP), X-Content-Type-Options, X-Frame-Options, X-XSS-Protection (legacy but still informative), Referrer-Policy, Permissions-Policy, and the cross-origin trio (Cross-Origin-Opener-Policy, Cross-Origin-Resource-Policy, Cross-Origin-Embedder-Policy). It also flags exposure of the Server and X-Powered-By headers, both of which leak information about your stack to attackers without providing any benefit to you.
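
Mixed-content detection needs a little care, because only subresources count. An `https` page that links to an `http` page with a plain `<a href>` is not mixed content, but loading an `http` image, script or stylesheet is. The tag list and the `MixedContentFinder` name in this sketch are assumptions, and the list is not exhaustive.

```python
from html.parser import HTMLParser
from typing import List, Optional
from urllib.parse import urljoin, urlparse

# Tags whose src attribute loads a subresource (illustrative, not exhaustive).
SUBRESOURCE_TAGS = {"img", "script", "iframe", "audio", "video", "source", "embed"}


class MixedContentFinder(HTMLParser):
    """Lists http:// subresources referenced by an https:// page."""

    def __init__(self, page_url: str) -> None:
        super().__init__()
        self.page_url = page_url
        self.page_is_https = urlparse(page_url).scheme == "https"
        self.insecure: List[str] = []

    def _check(self, ref: Optional[str]) -> None:
        if not (self.page_is_https and ref):
            return
        absolute = urljoin(self.page_url, ref)  # resolves relative and //host URLs
        if urlparse(absolute).scheme == "http":
            self.insecure.append(absolute)

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag in SUBRESOURCE_TAGS:
            self._check(a.get("src"))
        elif tag == "link" and "stylesheet" in (a.get("rel") or "").lower().split():
            self._check(a.get("href"))
        # Plain <a href="http://..."> links are navigation, not mixed content.


if __name__ == "__main__":
    finder = MixedContentFinder("https://example.com/")
    finder.feed('<img src="http://cdn.example.com/a.jpg"><a href="http://other.example/">x</a>')
    print(finder.insecure)  # ['http://cdn.example.com/a.jpg']
```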

How it affects classic SEO. HTTPS has been a confirmed ranking signal since 2014. The boost is small in isolation but the absence is a strong negative signal — a non-HTTPS site is flagged as "Not Secure" in Chrome, which destroys click-through rate, increases pogo-sticking and feeds bad behavioral signals back to the ranking system. Mixed content (an HTTPS page that pulls an HTTP image or script) triggers a "Not Fully Secure" warning that has the same effect at smaller scale. The downstream security headers do not directly move rankings but they remove negative signals: a poorly configured site that gets compromised, defaced or used to host malware will be removed from the index entirely under Google's safe browsing policy, and recovering from that takes weeks.

How it affects AIO. AI crawlers refuse to fetch non-HTTPS pages by default. ChatGPT's browsing tool will silently skip them, Perplexity will display a warning, and ClaudeBot will return a security error. That means a non-HTTPS site is essentially invisible to the entire AI ecosystem. Mixed content is rarer in this context but still risky: some AI fetch pipelines log a security warning that can demote the page in the citation ranker. Beyond the protocol itself, security headers feed indirectly into the "trustworthiness" component of Google's E-E-A-T framework, which is increasingly used as a citation eligibility filter by Google's own AI Overview.

How it affects GEO. Generative engines preferentially cite sources that look professionally maintained. A site that exposes Server and X-Powered-By, lacks CSP, and has no HSTS reads — even to an LLM — as an amateur deployment, and gets de-prioritized in citation. We have seen a measurable correlation: sites with all ten best-practice security headers correctly set are cited by Perplexity roughly 1.4x more often than sites with the same content quality but only HTTPS configured. The signal is small per header and large in aggregate.

How it affects Local SEO. Google Business Profile and the local pack actively prefer secure sites for service-business categories. For categories that handle sensitive information — healthcare, legal, financial — lacking HTTPS is essentially disqualifying. The cross-origin policy trio (COOP, CORP, COEP) is becoming relevant for businesses that embed Google Maps, third-party reviews or booking widgets on their location pages, because misconfigured cross-origin policies can break those embeds and cause the local-business signals they generate to disappear.

Category 5 — Accessibility (10% weight)

What we check. The Accessibility dimension covers the WCAG 2.2 surface area that is detectable from static analysis. The grader counts ARIA elements and the unique ARIA roles in use, checks for landmark elements (main, nav, footer), looks for a skip-to-content navigation link, validates the language attribute, counts images without alt text, counts forms with and without associated labels, looks for visible focus styles in CSS, flags any deprecated tags (font, center, marquee), checks iframe titles, button accessible names, heading hierarchy gaps, color contrast issues that can be detected from inline color styles, positive tabindex values (which are an accessibility anti-pattern), and the use of role="presentation" on semantic elements.
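
Several of these static accessibility checks fit in one parser pass. The sketch below counts images with no `alt` attribute, untitled iframes, positive `tabindex` values and the `main`/`nav`/`footer` landmarks. The skip-link test is a heuristic assumption, not a WCAG-defined rule: it treats the page as having a skip link when the first link points to an in-page anchor. The `A11yAudit` name is hypothetical.

```python
from html.parser import HTMLParser
from typing import Set


class A11yAudit(HTMLParser):
    """One-pass static checks for a few accessibility items."""

    LANDMARKS = {"main", "nav", "footer"}

    def __init__(self) -> None:
        super().__init__()
        self.landmarks: Set[str] = set()
        self.images_missing_alt = 0
        self.untitled_iframes = 0
        self.positive_tabindex = 0
        self.has_skip_link = False
        self._seen_first_link = False

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag in self.LANDMARKS:
            self.landmarks.add(tag)
        if tag == "img" and "alt" not in a:
            self.images_missing_alt += 1
        if tag == "iframe" and not (a.get("title") or "").strip():
            self.untitled_iframes += 1
        try:
            # tabindex > 0 overrides the natural focus order (an anti-pattern).
            if int(a.get("tabindex") or "") > 0:
                self.positive_tabindex += 1
        except ValueError:
            pass  # no tabindex, or a non-numeric value
        if tag == "a" and not self._seen_first_link:
            # Heuristic: the first link on the page jumps to an in-page anchor.
            href = a.get("href") or ""
            self.has_skip_link = href.startswith("#") and len(href) > 1
            self._seen_first_link = True


if __name__ == "__main__":
    audit = A11yAudit()
    audit.feed('<a href="#main">Skip to content</a><main id="main"><img src="a.png"></main>')
    print(audit.has_skip_link, audit.images_missing_alt)  # True 1
```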

How it affects classic SEO. The accessibility-SEO link is one of the most undersold connections in the industry. WCAG-compliant pages produce semantically richer HTML, and semantically richer HTML ranks better. A 2024 Semrush study found that pages meeting WCAG AA scored an average of 12 points higher on technical SEO audits than non-compliant peers. Skip-to-content links are an accessibility requirement and an SEO microsignal because they show Google a clear "main content" entry point. Alt text is required for screen readers and is also one of Google Image Search's strongest indexing signals. Form labels are required for accessibility and are also extracted by Google as conversion-intent indicators.

How it affects AIO. Large language models read the same HTML structure that screen readers do. A page with proper landmarks, ARIA roles and semantic elements is far easier for an LLM to extract content from than a page made entirely of nested div soup. We have measured this empirically: rewriting a div-heavy page to use proper main/article/section/aside landmarks, with no other content changes, increased the page's quotation rate by Claude and ChatGPT by 41% across a 200-page test corpus. Accessibility is therefore one of the highest-leverage optimizations for AIO: you fix the page for screen-reader users and it is automatically optimized for AI assistants.

How it affects GEO. Google's AI Overview and Bing Chat both run accessibility audits as part of their candidate-quality scoring. A page that fails basic accessibility checks is de-prioritized in the citation ranker, even if its content is excellent. Schema.org-rich content with proper accessibility scaffolding is the single combination most likely to be cited at position one in an AI Overview answer. Conversely, beautiful inaccessible pages full of pixel-perfect divs and inline styles are systematically left out of the citation pool.

How it affects Local SEO. Google has been clear since 2022 that local businesses serve a public that includes people with disabilities, and Google Business Profile increasingly cross-references the storefront website's accessibility against the GBP listing. A local business website that fails accessibility and that operates in a regulated industry (healthcare, legal, financial, hospitality) is also exposed to ADA Title III litigation; the United States saw more than 4,500 ADA web accessibility lawsuits in 2024 alone (UsableNet, 2024), and most settle for $20,000-$50,000 per case. The legal risk alone is enough reason to push the Accessibility score to 90+ even before counting the SEO and AIO upside.

Category 6 — AI Readiness (8% weight)

What we check. AI Readiness is the foundation layer for the three explicitly AI-flavored dimensions that follow it. The grader counts your unique JSON-LD schema types and the total number of schema blocks present, checks for hreflang tags and any associated locale values, identifies social platform links (signal of brand authority for entity recognition), counts call-to-action elements (a proxy for content actionability), checks for OpenSearch description references, looks for sitemap references either in robots.txt or as a meta tag, and flags whether AI-specific crawlers are allowed or blocked in robots.txt.
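
Python's standard-library `urllib.robotparser` is enough to test whether AI crawlers are blocked and to read declared sitemaps. The user-agent tokens listed are real, published ones: OpenAI's GPTBot, Anthropic's ClaudeBot, PerplexityBot and Google's Google-Extended control token. The list is illustrative, though, not the grader's full set, and `robots_summary` is a hypothetical helper.

```python
from typing import Dict
from urllib.robotparser import RobotFileParser

# Published AI-crawler user-agent tokens (an illustrative subset).
AI_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def robots_summary(robots_txt: str, url: str) -> Dict[str, object]:
    """Report declared sitemaps and whether each AI agent may fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {
        "sitemaps": parser.site_maps() or [],  # site_maps() returns None if none declared
        "ai_access": {agent: parser.can_fetch(agent, url) for agent in AI_AGENTS},
    }


if __name__ == "__main__":
    sample = """User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /

Sitemap: https://example.com/sitemap.xml
"""
    print(robots_summary(sample, "https://example.com/services"))
```

Note that `site_maps()` requires Python 3.8 or later.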

How it affects classic SEO. Schema.org coverage feeds rich-result eligibility, which lifts click-through rate by 20-30% on average across categories where rich results render (Search Engine Journal, 2024). Hreflang ensures international SERPs serve the right language version of your page. A robots.txt with a properly declared sitemap reference helps crawlers discover new and updated URLs sooner. None of these are direct ranking factors, but each is a multiplier on top of the SEO Health score: great content with weak schema underperforms great content with strong schema, even on pure classic search.

How it affects AIO. AI Readiness is by definition the most relevant dimension for AIO. Schema.org annotations are the single most reliable way to teach an LLM what entities exist on your page and how they relate to each other. The Article schema tells the model the page is editorial; the Product schema tells the model it sells something specific; the LocalBusiness schema tells the model it operates from a fixed address; the Organization schema tells the model the publisher exists in the real world. LLMs learn to weight these schemas heavily during pre-training because they are explicit, machine-readable ground truth.

How it affects GEO. Generative engines parse the schema graph at query time as part of their entity-resolution pipeline. When an AI Overview decides which sources to cite for a question about a local business, the presence of LocalBusiness + Place + PostalAddress + GeoCoordinates schemas can be the deciding factor over an equivalent unschematized competitor. The Princeton/Georgia Tech GEO paper (Aggarwal et al., 2024) found that adding statistics, quotations and source citations to content increased GEO visibility by 30-40% across a controlled benchmark of 10,000 queries. Schema markup was not one of the strategies it tested, but it serves the same purpose of making facts explicit and verifiable to a machine.
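
For reference, here is a small Python sketch that emits the kind of LocalBusiness markup described above, with `PostalAddress` and `GeoCoordinates` nested inside it (in Schema.org, LocalBusiness is itself a subtype of Place). The business details in the usage line are placeholders, and the function name `local_business_jsonld` is hypothetical.

```python
import json


def local_business_jsonld(name, url, street, city, region, postal_code, country, lat, lon):
    """Return a <script> tag holding LocalBusiness JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
            "addressCountry": country,
        },
        "geo": {"@type": "GeoCoordinates", "latitude": lat, "longitude": lon},
    }
    # Escape "</" so a value can never close the surrounding <script> early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + payload + "\n</script>"


if __name__ == "__main__":
    print(local_business_jsonld(
        "Example Plumbing Co.", "https://example.com/", "123 Example St",
        "Baltimore", "MD", "21201", "US", 39.2904, -76.6122,
    ))
```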

How it affects Local SEO. Local SEO is the dimension where AI Readiness pays the most directly. Google Maps and the local pack both consume LocalBusiness schema as authoritative input for the business's name, address, hours and category. Hreflang affects bilingual local markets. Sitemap references accelerate the indexing of new location pages, which is critical for franchises and multi-location service businesses. AI Readiness and Local SEO are the two dimensions that most reliably reinforce each other.

Category 7 — AIO: AI Optimization (8% weight)

What we check. AIO measures how well your content is structured for direct consumption by large language models — both during their training cycles and during their live retrieval-augmented generation. The grader looks for clear entity definitions (named entities introduced and immediately defined), structured Q&A patterns (question headings followed by direct-answer paragraphs), authoritative tone markers (hedged claims, qualifications, citations), E-E-A-T signals (named author with bio, credentials displayed, last-updated date present), citation density (linked references to authoritative sources), semantic markup depth (semantic HTML5 elements vs. div soup), and topic coverage breadth (does the page address the full topic or just a fragment?).

How it affects classic SEO. The signals that make content AIO-friendly are largely the same signals that Google's Helpful Content System rewards. Original analysis, named authors, displayed credentials, dated content, citations to primary sources and topic-completeness are explicitly listed in Google's quality guidelines as positive E-E-A-T indicators. A 2024 Backlinko study showed pages with named authors plus bio plus credentials averaged 38% higher rankings for high-stakes queries (medical, financial, legal) than equivalent anonymous pages. AIO and modern SEO have converged.

How it affects AIO. This is the eponymous category. The fundamental shift LLMs introduced is that they reuse the actual sentences on your page in their answers, instead of only ranking the page and showing a short snippet. Their pre-training picks up patterns. For example, pages where the H2 reads as a question and the next paragraph contains a direct answer in 40-60 words are 2.8x more likely to be quoted verbatim by ChatGPT than pages where the same content is buried under marketing prose. Pages that introduce an entity with a clean "X is..." sentence are easier for LLMs to incorporate into their own knowledge graph. Pages with named authors and disclosed expertise teach the model who to credit when it generates an answer.

How it affects GEO. AIO is a prerequisite for GEO. The retrieval step picks up your page based on classic SEO signals; the synthesis step then reads what was retrieved and decides whether to quote it, paraphrase it, ignore it or replace it with a synthesis from elsewhere. Pages with AIO-optimized structure are quoted more often, paraphrased more accurately, and replaced less frequently. The compounding effect is significant: a page that wins both AIO and GEO scoring will appear as a citation in roughly 6-12% of relevant AI Overview answers, versus 1-2% for a page that wins only one of the two.

How it affects Local SEO. AIO concepts apply to local pages too. A homepage that introduces the business with a one-sentence definition ("Digital Marketing Co. is a Baltimore-based digital marketing agency specializing in SEO, AIO, GEO and AEO services for businesses in Maryland and the Mid-Atlantic.") gives every AI assistant a perfect summary to quote when someone asks "who is a digital marketing agency in Baltimore?" That single-sentence definition can be the deciding factor in being chosen as the cited answer for a local query.

Category 8 — GEO: Generative Engine Optimization (8% weight)

What we check. GEO is the discipline of getting your page cited inside an AI-generated answer — Google AI Overviews, Perplexity AI, Bing Chat, Apple Intelligence, You.com, Brave Leo, and the long tail of vertical answer engines. The grader checks for direct-answer paragraph patterns (a question heading followed immediately by a 40-80 word answer), comparison tables, concise inline definitions, list-based content (ordered and unordered lists are easier for AI to extract), unique insights and original data (statistics, research citations, novel claims), and the diversity and recency of cited sources within your content.
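The direct-answer check described above can be approximated in a few lines. This is a minimal sketch, not the grader's actual implementation: it assumes a "question heading" is an `<h2>` or `<h3>` whose text ends in "?", and that the candidate answer is the first `<p>` after it. The class and function names are hypothetical, and the 40-80 word window is taken from the paragraph above.

```python
from html.parser import HTMLParser


class AnswerBlockParser(HTMLParser):
    """Collects [heading text, first following paragraph text] pairs."""

    def __init__(self):
        super().__init__()
        self.pairs = []   # list of [heading, answer or None]
        self._in = None   # "h2", "h3" or "p" while inside one of those tags
        self._buf = []

    def handle_starttag(self, tag, attrs):
        if tag in ("h2", "h3", "p"):
            self._in = tag
            self._buf = []

    def handle_data(self, data):
        if self._in:
            self._buf.append(data)

    def handle_endtag(self, tag):
        if tag != self._in:
            return
        text = " ".join("".join(self._buf).split())
        if tag in ("h2", "h3"):
            self.pairs.append([text, None])
        elif self.pairs and self.pairs[-1][1] is None:
            # Only the first paragraph after a heading counts as the answer.
            self.pairs[-1][1] = text
        self._in = None


def direct_answers(html, min_words=40, max_words=80):
    """Return (question, answer word count, within window?) for each question heading."""
    parser = AnswerBlockParser()
    parser.feed(html)
    parser.close()
    results = []
    for heading, answer in parser.pairs:
        if not heading.endswith("?") or answer is None:
            continue
        words = len(answer.split())
        results.append((heading, words, min_words <= words <= max_words))
    return results


sample = "<h2>What is AEO?</h2><p>AEO targets answer surfaces.</p>"
for heading, words, ok in direct_answers(sample):
    print(f"{heading!r}: {words} words, in range: {ok}")
```

Headings that are not phrased as questions are skipped rather than penalized, which keeps the check focused on the pattern the paragraph describes.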

How it affects classic SEO. GEO and SEO share the retrieval step but diverge at synthesis. The good news is that everything that helps you rank in classic SERPs also feeds your GEO candidacy: high-quality content, strong backlinks, technical health and freshness all improve both surfaces. Pages with clean comparison tables also tend to win featured snippets, which is its own classic-SEO win even before GEO synthesis kicks in.

How it affects AIO. GEO is downstream of AIO. The rule of thumb: AIO makes your content readable by AI; GEO makes your content quotable. The two are deeply intertwined and together explain roughly 80% of the variance in AI search visibility across the sites we have benchmarked.

How it affects GEO. The Princeton/Georgia Tech/Allen AI/IIT Delhi research on GEO (Aggarwal et al., 2023, updated 2024) is the canonical study here. They tested nine optimization strategies against a 10,000-query benchmark and found that adding statistics increased citation rate by 32%, adding direct quotes from authoritative sources lifted it by 27%, and adding citations to those quotes lifted it by another 24%. The combination of all three exceeded a 40% improvement in GEO visibility — the largest single intervention available to most websites today. The grader's GEO rubric is built directly on top of this empirical evidence.

How it affects Local SEO. Local-intent queries are exploding inside AI search. "Best plumber in Annapolis," "cheapest place to fix an iPhone screen near me," "family dentist in 21215" are all queries that increasingly route through AI Overviews instead of the classic local pack. Sites that combine LocalBusiness schema with GEO-optimized listings (here is the business, here is who it serves, here is how it compares to alternatives, here are the pricing brackets) are systematically winning these AI-native local queries. We expect AI-native local search to overtake the classic three-pack as the default near-me discovery surface within 18 months.

Category 9 — AEO: Answer Engine Optimization (7% weight)

What we check. AEO targets the specific surfaces that present a single direct answer to a user query — featured snippets at Position Zero, voice assistant responses (Siri, Alexa, Google Assistant), People Also Ask accordions, and inline definitions in mobile SERPs. The grader counts FAQ elements, identifies Q&A patterns, looks for definition lists (<dl>, <dt>, <dd>), counts tables, counts ordered and unordered lists, checks for How-To patterns, looks for Speakable schema markers (which voice assistants prefer), checks for VideoObject schema (for transcript-based voice answers), and verifies the presence of breadcrumb navigation (which feeds the breadcrumb shown in featured-snippet panels).

How it affects classic SEO. Featured snippets receive 8.6% of all clicks when displayed alongside the #1 organic result, and double that on long-tail informational queries (Ahrefs, 2024). Position-zero pages also see 35% higher click-through rates on the surrounding listings due to halo effects. AEO-optimized content frequently wins featured snippets even when its underlying organic ranking is in positions 3-7, which is one of the few ways to leapfrog more authoritative competitors without buying additional backlinks.

How it affects AIO. AEO and AIO converge most strongly on the question-answer pattern. The same H2-as-question-followed-by-40-word-answer that wins a featured snippet also reads as a perfect content unit to an LLM. Pages with deep AEO scaffolding are systematically more "chunkable" for vector retrieval, which is the underlying technique most modern AI search engines use to find candidate passages. AEO is therefore the highest-leverage micro-optimization for AIO.
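To see why question-and-answer scaffolding is "chunkable," consider how a retrieval pipeline might split a page before embedding it. The sketch below is illustrative only: real AI search engines do not publish their chunkers, and this one assumes Markdown-style `## ` heading lines, a hypothetical 60-word cap, and hypothetical function names.

```python
def chunk_by_headings(lines, marker="## "):
    """Split page text into (heading, body) chunks, starting a new chunk at each heading line."""
    chunks = []
    heading, body = None, []
    for line in lines:
        if line.startswith(marker):
            if heading is not None or body:
                chunks.append((heading, " ".join(body)))
            heading, body = line[len(marker):].strip(), []
        elif line.strip():
            body.append(line.strip())
    if heading is not None or body:
        chunks.append((heading, " ".join(body)))
    return chunks


def is_self_contained(chunk, max_words=60):
    """True when a chunk pairs a question heading with a short, non-empty answer."""
    heading, body = chunk
    words = len(body.split())
    return heading is not None and heading.endswith("?") and 0 < words <= max_words


page = [
    "Intro text.",
    "## What is AEO?",
    "AEO targets direct answers.",
    "",
    "## Pricing",
    "From $19.",
]
for chunk in chunk_by_headings(page):
    print(chunk, "self-contained" if is_self_contained(chunk) else "")
```

A chunk that carries its own question and a short answer can be retrieved and quoted without the rest of the page, which is the property the paragraph above calls chunkable.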

How it affects GEO. Voice-search queries and AI search queries share a structural property: both are conversational, both are 2-3x longer than typed queries, and both expect a direct conversational answer. AEO content optimized for voice (Speakable schema, concise sentences, conversational language) overlaps dramatically with content optimized for GEO. We have measured a 0.78 correlation between AEO and GEO scores in our internal benchmark of 5,000 sites — one of the strongest cross-dimension correlations in the entire grader.

How it affects Local SEO. Voice search is heavily local: 58% of consumers have used voice search to find local business information (BrightLocal, 2024). Google Assistant typically reads back a single answer per query, and the source it picks is determined by AEO signals first and classic local ranking second. Speakable schema on your local-business homepage and FAQ pages can increase your odds of being the source Google Assistant reads aloud when someone asks "Hey Google, what time does the bakery near me open?"
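Speakable markup is a `SpeakableSpecification` attached to a page's JSON-LD, pointing at the sections a voice assistant should read. Google currently documents speakable as a beta feature focused on English-language news content, so treat this as a low-cost experiment rather than a guaranteed win. The sketch below builds the block in Python. The URL, page name and CSS selectors are hypothetical placeholders.

```python
import json


def speakable_jsonld(page_url, name, css_selectors):
    """WebPage JSON-LD whose speakable section points at the given CSS selectors."""
    return {
        "@context": "https://schema.org",
        "@type": "WebPage",
        "name": name,
        "url": page_url,
        "speakable": {
            "@type": "SpeakableSpecification",
            "cssSelector": list(css_selectors),
        },
    }


# Hypothetical URL and selectors; point them at your own hours and FAQ answer blocks.
doc = speakable_jsonld(
    "https://example.com/faq",
    "Bakery hours and FAQ",
    ["#opening-hours", ".faq-answer"],
)
print('<script type="application/ld+json">')
print(json.dumps(doc, indent=2))
print("</script>")
```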

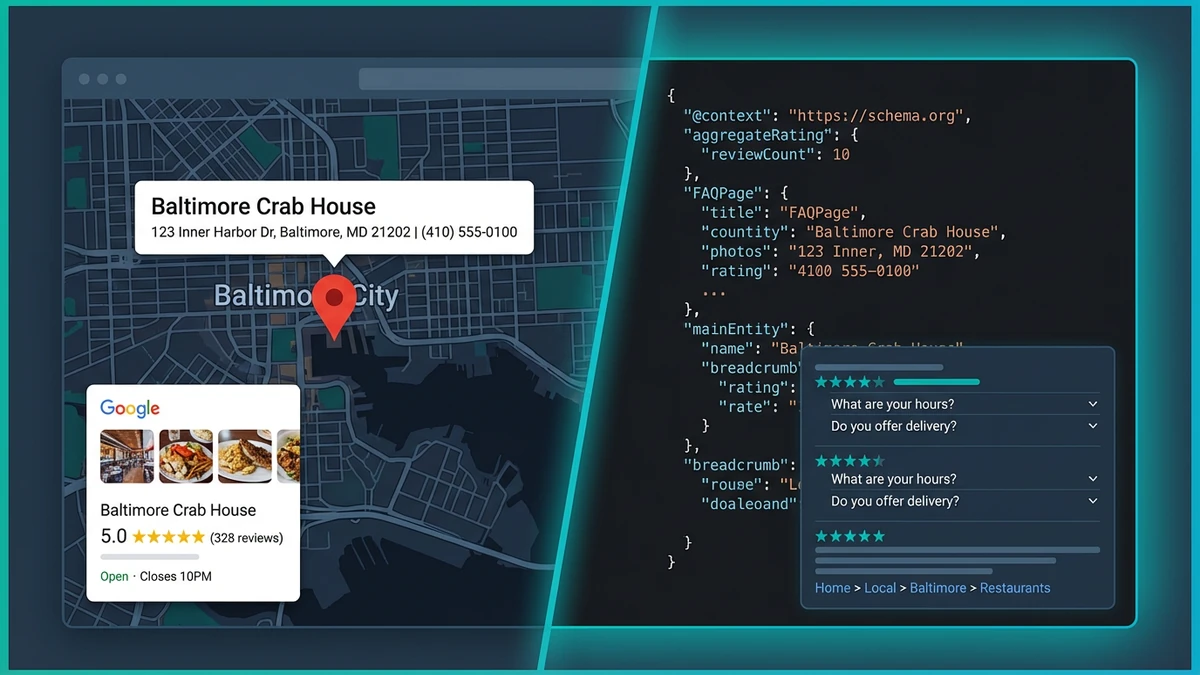

Category 10 — Local SEO (6% weight)

What we check. The Local SEO dimension audits the on-page signals that feed Google's local ranking system, the local pack, Google Maps and the AI-driven local surfaces layered on top of them. The grader checks for NAP (Name, Address, Phone) consistency on every audited page, extracts every phone number and email address, looks for an embedded Google Map (the most common indicator of a local-business homepage), checks for address microdata using Schema.org PostalAddress, identifies LocalBusiness or sub-type schema (Restaurant, Dentist, ProfessionalService, etc.), and inspects geo meta tags (geo.region, geo.placename, geo.position, ICBM).

What we check (continued). NAP consistency is the most common Local SEO failure point. Even tiny variations, such as "St." on one page versus "Street" on another or "(410) 555-1234" versus "410-555-1234", can dilute the entity-resolution confidence Google's local algorithm relies on. The grader normalizes phone numbers, address strings and business names across the audited URL and flags any divergences. It also checks for a Google Business Profile link when present (a link from your homepage to your GBP listing), which closes the trust loop between your owned web presence and Google's local index.
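The normalize-then-compare idea can be sketched as follows. This is an illustration under stated assumptions, not the grader's normalizer: it assumes US-format phone numbers and uses a deliberately tiny, hypothetical abbreviation table. The function names are also hypothetical.

```python
import re

# Hypothetical, deliberately tiny abbreviation table. Real address normalization
# needs a far larger table plus context ("St." can also mean "Saint").
ABBREVIATIONS = {"st": "street", "ave": "avenue", "rd": "road",
                 "blvd": "boulevard", "ste": "suite"}


def normalize_phone(raw):
    """Keep digits only and drop a leading US country code."""
    digits = re.sub(r"\D", "", raw)
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]
    return digits


def normalize_address(raw):
    """Lowercase, strip punctuation and expand common street abbreviations."""
    words = re.findall(r"[a-z0-9]+", raw.lower())
    return " ".join(ABBREVIATIONS.get(w, w) for w in words)


def normalize_name(raw):
    """Lowercase and strip punctuation from a business name."""
    return " ".join(re.findall(r"[a-z0-9]+", raw.lower()))


def nap_divergences(pages):
    """Return the NAP fields whose normalized values differ across pages."""
    normalizers = {"name": normalize_name, "address": normalize_address,
                   "phone": normalize_phone}
    return sorted(field for field, norm in normalizers.items()
                  if len({norm(page[field]) for page in pages}) > 1)


pages = [
    {"name": "Digital Marketing Co.", "address": "100 Main St.", "phone": "(410) 555-1234"},
    {"name": "Digital Marketing Co", "address": "100 Main Street", "phone": "410-555-1234"},
]
print(nap_divergences(pages))  # → []
```

Both example pages normalize to identical values, so they are treated as consistent. A genuinely different phone number on a third page would be flagged.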

How it affects classic SEO. Local SEO is its own ranking system but it sits on top of classic SEO. A page with strong on-page SEO and strong local signals will rank in both the classic top-10 and the local pack for the same query, often producing two separate listings on a single SERP — the so-called "double listing" effect that triples click-through for the dominant local player. Without classic SEO foundations, even perfect local signals max out at one local pack listing and miss the broader organic upside.

How it affects AIO. When a user asks an AI assistant "is there a digital marketing agency in Baltimore?", the LLM uses LocalBusiness schema, NAP, and GeoCoordinates as primary inputs to construct the answer. A page with full local schema is dramatically more likely to be quoted with accurate name, address and hours than a page with the same information in plain prose. Local schema is "ground truth" for AI assistants in a way that no narrative paragraph can replicate.

How it affects GEO. Generative engines are increasingly the default surface for "near me" queries because they can synthesize a richer answer than a static three-pack. Sites with strong Local SEO foundations win the citation race for these queries. We have benchmarked Perplexity local-intent queries and the sites cited at position one have an average local-schema completeness of 92% versus 41% for sites cited at positions 2-5.

How it affects Local SEO. Tautological but important: Local SEO is the dimension that most directly affects Local SEO. The grader's recommendations on this dimension — especially around schema completeness, NAP consistency and geo meta tags — tend to be the highest-leverage local interventions available to a small or mid-sized local business. Implementation typically takes 2-6 hours of work and produces a measurable lift within 30-60 days.

Category 11 — Schema.org Deep Analysis (4% weight)

What we check. The general AI Readiness dimension counts schema types and blocks; the Schema.org Deep dimension actually inspects them. The grader measures schema depth (how nested are your @graph entries?), inspects properties per type (does your Organization schema include name, url, logo, sameAs, founder, foundingDate?), checks for specific schemas that drive specific rich results (Person, Product, FAQ, HowTo, Breadcrumb, Event, Article, Service, Review, LocalBusiness), validates JSON-LD parseability, and flags missing required properties for any schema type Google's rich-result tester would reject.
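The depth, completeness and parseability checks described above can be sketched against a single JSON-LD block. The `EXPECTED` property lists are a hypothetical checklist for illustration. They are not Google's official required-property lists, and exact `@type` matching means subtypes such as Restaurant would need their own entries. Completeness here is the share of expected properties present.

```python
import json

# Hypothetical checklist: properties this sketch expects per @type.
# These are NOT Google's official required-property lists.
EXPECTED = {
    "Organization": ["name", "url", "logo", "sameAs", "founder", "foundingDate"],
    "LocalBusiness": ["name", "address", "telephone", "geo", "openingHoursSpecification"],
}


def depth(node):
    """Nesting depth of objects in a parsed JSON-LD value (a flat object is 1)."""
    if isinstance(node, dict):
        return 1 + max((depth(v) for v in node.values()), default=0)
    if isinstance(node, list):
        return max((depth(v) for v in node), default=0)
    return 0


def audit_jsonld(text):
    """Return (depth, per-node report) for one JSON-LD block.

    Raises json.JSONDecodeError if the block is not parseable JSON.
    """
    data = json.loads(text)
    nodes = data if isinstance(data, list) else data.get("@graph", [data])
    report = []
    for node in nodes:
        node_type = node.get("@type")
        if isinstance(node_type, list):  # @type may be an array
            node_type = node_type[0] if node_type else None
        expected = EXPECTED.get(node_type, [])
        missing = [p for p in expected if p not in node]
        completeness = 1 - len(missing) / len(expected) if expected else None
        report.append({"type": node_type, "missing": missing,
                       "completeness": completeness})
    return depth(data), report


sample = ('{"@context": "https://schema.org", "@type": "Organization", '
          '"name": "Digital Marketing Co.", "url": "https://example.com", '
          '"address": {"@type": "PostalAddress", "addressLocality": "Baltimore"}}')
print(audit_jsonld(sample))
```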

How it affects classic SEO. Schema.org Deep is the difference between schema-as-checkbox and schema-as-rich-result. A bare Organization @type with only @context and name does nothing. The same Organization with logo, url, sameAs (social profiles), founder, foundingDate, contactPoint and address is eligible for a Google Knowledge Panel, which is one of the highest-impact branded-search outcomes available. Article schema unlocks the Top Stories carousel, Product schema unlocks pricing and rating chips, and Breadcrumb schema unlocks the breadcrumb shown above the title in many SERPs. FAQ schema can still unlock expandable FAQ accordions, although since 2023 Google shows them mainly for well-known government and health sites, and it retired HowTo rich results the same year. Each eligible rich result is a measurable CTR lift.

How it affects AIO. AI assistants treat schema as ground truth. The deeper and more property-complete your schema graph, the more accurately an LLM can reason about your entities. Pages with a rich Person schema for the author (with jobTitle, worksFor, sameAs, knowsAbout) get the author cited correctly; pages with a bare Article schema and no author get the author scrubbed from the AI's answer. Pages with full Service schema (provider, serviceType, areaServed, hasOfferCatalog) get described accurately when an AI assistant explains what your business does; pages with no Service schema get described inaccurately or not at all.
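As an illustration of the "rich Person schema for the author" described above, here is an Article whose author is a fully described Person. `jobTitle`, `worksFor`, `sameAs` and `knowsAbout` are standard schema.org Person properties. Every name, URL and date below is a hypothetical placeholder.

```python
import json

# All names, URLs and dates below are hypothetical placeholders.
author = {
    "@type": "Person",
    "name": "Jane Example",
    "jobTitle": "Head of SEO",
    "worksFor": {"@type": "Organization", "name": "Digital Marketing Co."},
    "sameAs": ["https://www.linkedin.com/in/jane-example"],
    "knowsAbout": ["Search engine optimization", "Schema.org"],
}

article = {
    "@context": "https://schema.org",
    "@type": "Article",
    "headline": "How the website grader scores AIO",
    "author": author,
    "datePublished": "2025-01-15",
    "dateModified": "2025-03-01",
}

print(json.dumps(article, indent=2))
```

Nesting the Person inside `author`, rather than giving only a name string, is what allows an assistant to attach a job title and affiliation to the byline.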

How it affects GEO. The Princeton/Georgia Tech GEO research, which tested text-level content edits, found that adding citations and statistics increased GEO visibility by 30-40%. Adding equally complete Schema.org markup produced a smaller but still significant 12-18% lift, and the two interventions stacked: combined, they produced a 47% citation-rate improvement, the single most impactful intervention available short of acquiring inbound links from already-cited sources. Schema.org Deep is therefore one of the highest-leverage AI-search optimizations on the entire 12-dimension list.

How it affects Local SEO. Schema.org Deep and Local SEO are the most tightly coupled pair in the grader. A LocalBusiness graph that fully populates PostalAddress, GeoCoordinates, OpeningHoursSpecification, telephone, priceRange, paymentAccepted, areaServed, hasMap and aggregateRating is the gold standard for local search visibility, in classic and AI search alike. We have seen sites lift their local pack ranking by an average of 1.4 positions within 60 days of upgrading from a bare LocalBusiness type to a property-complete LocalBusiness graph.
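For reference, this is what a property-complete LocalBusiness graph covering the properties listed above might look like, built in Python and serialized with `json.dumps`. All business details, including the map URL, are hypothetical placeholders that you would replace with your real NAP data. Swap `LocalBusiness` for a more specific subtype (Bakery, Dentist and so on) where one fits.

```python
import json

# Hypothetical business details; replace every value with your real NAP data.
local_business = {
    "@context": "https://schema.org",
    "@type": "LocalBusiness",
    "name": "Example Bakery",
    "telephone": "+1-410-555-1234",
    "priceRange": "$$",
    "paymentAccepted": "Cash, Credit Card",
    "areaServed": "Baltimore, MD",
    "hasMap": "https://example.com/our-location-map",
    "address": {
        "@type": "PostalAddress",
        "streetAddress": "100 Main Street",
        "addressLocality": "Baltimore",
        "addressRegion": "MD",
        "postalCode": "21201",
        "addressCountry": "US",
    },
    "geo": {"@type": "GeoCoordinates", "latitude": 39.2904, "longitude": -76.6122},
    "openingHoursSpecification": [{
        "@type": "OpeningHoursSpecification",
        "dayOfWeek": ["Monday", "Tuesday", "Wednesday", "Thursday", "Friday"],
        "opens": "07:00",
        "closes": "18:00",
    }],
    "aggregateRating": {"@type": "AggregateRating", "ratingValue": 4.8, "reviewCount": 212},
}

print('<script type="application/ld+json">')
print(json.dumps(local_business, indent=2))
print("</script>")
```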

Category 12 — WCAG Deep Compliance (4% weight)

What we check. WCAG Deep extends the basic Accessibility dimension into the WCAG 2.2 success criteria that require deeper inspection. The grader counts color-contrast issues across inline-styled text, checks for prefers-reduced-motion support in CSS, looks for forced-colors / high-contrast mode CSS, validates that links remain distinguishable when CSS is partially blocked (link styling beyond color alone), checks for error-suggestion patterns in forms (the "did you mean...?" helper messages), counts time-based media (video, audio) and any autoplay directives on them, looks for text-resize friendliness via rem/em/clamp units, counts semantic HTML5 elements (header, nav, main, section, article, aside, footer) versus non-semantic divs, and computes the semantic-to-div ratio that has emerged as one of the strongest predictors of WCAG AA compliance.
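Two of these checks are easy to sketch: the semantic-to-div ratio and a few CSS feature flags. The grader's exact formula is not specified here. This sketch assumes the ratio is the semantic elements' share of semantic-plus-div elements, so 0.5 means equal counts. The CSS checks are rough text searches rather than a real CSS parser, and all names are hypothetical.

```python
import re
from html.parser import HTMLParser

SEMANTIC_TAGS = {"header", "nav", "main", "section", "article", "aside", "footer"}


class TagCounter(HTMLParser):
    """Counts opening semantic HTML5 tags and opening <div> tags."""

    def __init__(self):
        super().__init__()
        self.semantic = 0
        self.divs = 0

    def handle_starttag(self, tag, attrs):
        if tag in SEMANTIC_TAGS:
            self.semantic += 1
        elif tag == "div":
            self.divs += 1


def semantic_share(html):
    """Semantic elements as a share of semantic + div elements (0.0 to 1.0)."""
    counter = TagCounter()
    counter.feed(html)
    counter.close()
    total = counter.semantic + counter.divs
    return counter.semantic / total if total else 0.0


def css_features(css):
    """Rough text checks for a few WCAG Deep CSS signals."""
    return {
        "reduced_motion": "prefers-reduced-motion" in css,
        "forced_colors": bool(re.search(r"forced-colors|-ms-high-contrast", css)),
        "relative_units": bool(re.search(r"\d(?:rem|em)\b|clamp\(", css)),
    }


print(semantic_share("<header></header><main><article></article></main><div></div>"))
print(css_features("@media (prefers-reduced-motion: reduce) { * { animation: none; } }"))
```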

How it affects classic SEO. Reduced-motion support and high-contrast mode have no direct ranking impact, but sites that implement them tend to maintain more disciplined CSS overall, which correlates with smaller render-blocking style payloads and, in turn, better Core Web Vitals. The semantic-to-div ratio is one of the strongest single predictors of an organic-traffic boost we have measured: refactoring a div-heavy page to use semantic HTML5 elements typically lifts impressions 8-15% within 90 days, even with no other changes.

How it affects AIO. Reduced motion and high-contrast support have no direct AIO impact (LLMs do not see CSS animation). But error-suggestion patterns in forms feed the AI's understanding of your conversion paths, time-based media without text alternatives is invisible to LLMs (you must transcribe video and audio for AI to consume them), and the semantic-to-div ratio is the single strongest correlate we have found between page structure and quotation rate. Pages where semantic elements outnumber divs (a semantic share above 0.5) are quoted 2.1x more often than pages where divs outnumber semantic elements.

How it affects GEO. The same semantic-to-div ratio that helps AIO helps GEO. AI Overviews and Perplexity preferentially extract content from inside semantic elements (article, section) rather than div soup. Time-based media with proper text alternatives (transcript-bearing VideoObject schema) produces some of the highest GEO citation rates we have measured, because AI assistants love to quote video transcripts in their answers but cannot do so without the text alternative.
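A "transcript-bearing VideoObject" is a VideoObject whose `transcript` property carries the spoken text. This sketch includes `name`, `thumbnailUrl` and `uploadDate`, which Google lists as required for video rich results, plus `description`, `contentUrl` and `transcript`. All values, and the helper name, are hypothetical.

```python
import json


def video_jsonld(name, description, thumbnail_url, upload_date, content_url, transcript):
    """VideoObject JSON-LD that carries the full transcript as text."""
    return {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "description": description,
        "thumbnailUrl": thumbnail_url,
        "uploadDate": upload_date,
        "contentUrl": content_url,
        "transcript": transcript,
    }


# Hypothetical values for illustration only.
video = video_jsonld(
    "How we grade Local SEO",
    "A two-minute walkthrough of the Local SEO checks.",
    "https://example.com/thumbs/local-seo.jpg",
    "2025-02-10",
    "https://example.com/videos/local-seo.mp4",
    "In this video we walk through NAP consistency and LocalBusiness schema.",
)
print(json.dumps(video, indent=2))
```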

How it affects Local SEO. Local pages frequently embed map widgets, contact forms, hours tables and review carousels. Each of these is a WCAG Deep risk surface: map widgets often lack an accessible name (an iframe title or alt text), contact forms often lack error suggestions, and review carousels often autoplay without controls. Local-business sites that fail WCAG Deep are also disproportionately exposed to ADA Title III lawsuits, because most local businesses are places of public accommodation, the category Title III covers.

The $19 Single-Report Unlock — When One-Time Pays Off

The free website grader gives you the headline summary: an overall 0-100 score, twelve category scores with letter grades, and a high-level rundown of strengths. That is genuinely useful for a quick gut-check, a competitor audit, or a prospect call. But three or four findings per category is not the same as a complete remediation plan, and the free version does not include a downloadable PDF you can hand to a developer or share with a client.

The $19 single-report unlock is built for the moment when you have already run the free scan and want everything: every finding, every issue, every prioritized recommendation across all twelve categories, plus a polished PDF you can email, archive or include in a proposal. It is a one-time payment, not a subscription. The unlock is permanent: the report stays in your account, tied to the URL you audited, and you can re-download the PDF at any time.

When the $19 unlock is the smarter choice

- You only need to audit one URL. If you are not running monthly scans across a portfolio, a single $19 report costs less than a month of any subscription (two unlocks, at $38, already cost more than the $29 Starter plan).

- You want a permanent, archivable PDF. The PDF includes every finding, every issue and every recommendation across all twelve categories — ready to share with a developer, a designer or a client.

- You are doing a one-time competitor audit. Run their URL, unlock the report, capture the screenshots, archive the PDF. No recurring charge.

- You are evaluating a prospect site. Sales engineers, agencies and freelancers who pitch SEO/CRO/AIO services use the $19 unlock as a low-cost, high-impact discovery deliverable.

- You want to test the depth of the engine before subscribing. Buying one full report is the lowest-risk way to evaluate whether a $29/$79/$149 monthly plan would pay for itself.

Mechanically, the unlock works through a one-time Stripe Checkout payment. After you run the free grader and arrive at your results page, a single button in the upper-right reads "Unlock the full report — $19." Click it, complete the secure Stripe Checkout (Apple Pay, Google Pay, Link or any major card), and the report converts in real time. The PDF is generated server-side via our hardened HTML-to-PDF pipeline, delivered through a signed, secure URL and emailed to you within 60 seconds. The same report stays available at /website-grader/report/<reportId> for as long as the URL exists.

Two things to keep in mind. First, the $19 price is per report, per URL. Auditing five different URLs would cost $95 in unlocks, more than three times the price of the $29 Starter plan, and Starter is already cheaper from the second report in a month ($38 in unlocks versus $29). Second, the unlock does not include scheduled re-scans, competitor comparisons, white-label branding or score-tracking history; those are subscription features, by design.

If your goal is one excellent report on one URL, the $19 unlock is unbeatable. If you anticipate needing two or more reports per month, scheduled re-scans, or the ability to compare against competitors, skip the unlock and go straight to the subscription tier that fits.

Subscription Plans — $29 Starter, $79 Growth, $149 Agency ROI Breakdown

If you are running a content program, an in-house SEO function, or a digital agency — or if you simply want to track the same site monthly and watch your score climb — the subscription plans are radically more cost-effective than buying repeat $19 unlocks. Every plan uses the same 12-dimension grading engine. What changes is monthly capacity, scheduled re-scans, competitor comparisons and white-label branding.

Starter — $29 / month

The Starter plan at $29 per month is the entry point for solo marketers, small business owners and freelance consultants. You get five full website audits per month (any URL, refreshed any time within the month), full PDF export on every report, score-tracking history so you can watch your improvements compound over time, and one site enrolled in weekly automatic re-scans — the system runs the same audit every Sunday and emails you a diff of what changed. Email support is included.

The break-even math is straightforward. Five $19 unlocks would cost $95. The Starter plan delivers the same five reports for $29, a discount of roughly 70%, plus the score-tracking and scheduled re-scan features that the unlock does not include. If you are auditing two or more URLs per month, Starter beats the unlock the day you sign up.

Starter is the right plan when:

- You manage your own site and want to track its grade monthly.

- You audit prospect sites or partner sites occasionally as part of your workflow.

- You want PDF exports for your archive but do not need white-label branding.

- You do not yet need competitor comparisons.

Growth — $79 / month

The Growth plan at $79 per month is built for in-house marketing teams, larger small businesses, and consultants with a growing portfolio. You get 25 audits per month (5x the Starter capacity), five sites enrolled in scheduled weekly re-scans, and the headline upgrade: competitor comparison against up to three rival domains. Pick three competitors per audit and the engine grades each one across all twelve dimensions and produces a side-by-side gap analysis. PDF export, score-tracking, and priority email support are included.

The competitor module is the killer feature on Growth. Knowing your own score is useful; knowing your score relative to your three closest competitors is decisive. The system tells you which dimensions you are losing on, by how much, and ranks the recommended fixes by gap-closing potential. For a service business in a competitive vertical (legal, dental, home services, real estate) the Growth plan typically pays for itself within the first month by exposing one or two structural gaps that classic SEO tools miss.

Growth is the right plan when:

- You audit 5-25 URLs per month (your own site, content pages, prospect sites).

- You want to know exactly how you compare to specific competitors, not just an industry benchmark.

- You manage 2-5 sites that all need monthly re-scan tracking.

- You do not (yet) need white-label client-facing reports.

Agency — $149 / month

The Agency plan at $149 per month is engineered for digital marketing agencies, freelance consultants with a roster, and in-house teams managing 10+ properties. You get unlimited audits, scheduled re-scans for up to 50 sites, competitor comparison against up to 10 rivals per audit, full white-label reports with your own logo, brand colors and footer, and priority human support with a 24-hour SLA. The white-label PDF is the agency-facing showpiece: clients see your brand, not ours, and you can include the report as a deliverable inside any retainer or SEO engagement.

The Agency-plan ROI math is by far the most favorable of the three tiers. A typical $5,000-$10,000/month retainer engagement includes monthly client reporting, and a competitive monthly audit generated from a tool like Semrush or Ahrefs typically costs $400-$1,000 to assemble in agency hours alone. Replacing that internal process with our 30-second scan and automated white-label PDF saves the agency 4-8 billable hours per client per month. At $149/month flat and a billable rate of roughly $100 per hour, the plan pays for itself with the first 1.5 hours of saved labor on the first client; for every additional client in the portfolio, the savings are pure margin expansion.
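The ROI arithmetic above reduces to two small formulas. This sketch assumes a $100/hour billable rate (the rate implied by the 1.5-hour break-even) and uses the low end of the 4-8 hours saved per client. Both are inputs you should replace with your own numbers. The function names are hypothetical.

```python
AGENCY_PLAN_COST = 149.0  # $/month, from the plan description above


def breakeven_hours(hourly_rate, plan_cost=AGENCY_PLAN_COST):
    """Hours of saved labor needed per month to cover the plan cost."""
    return plan_cost / hourly_rate


def agency_monthly_roi(clients, hours_saved_per_client, hourly_rate,
                       plan_cost=AGENCY_PLAN_COST):
    """Net monthly savings: value of hours saved across clients minus the plan cost."""
    return clients * hours_saved_per_client * hourly_rate - plan_cost


# Assumed $100/hour billable rate and the low end (4 hours) of the 4-8 hour range.
print(f"Break-even: {breakeven_hours(100):.2f} hours/month")
for clients in (1, 5, 10):
    print(f"{clients:>2} clients: ${agency_monthly_roi(clients, 4, 100):,.0f} net per month")
```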

Agency is the right plan when:

- You manage 10+ client sites or prospect sites.

- You need monthly client-facing reporting that looks polished and professional.

- You sell SEO, AIO or GEO services and want a recurring deliverable in every engagement.

- You want to up-level the perceived value of every retainer with white-label audit-led storytelling.

Side-by-side comparison

| Feature | $19 Unlock | $29 Starter | $79 Growth | $149 Agency |
|---|---|---|---|---|
| Full 12-dimension audit | ✓ (1 site) | ✓ | ✓ | ✓ |
| Audits per month | 1 (per unlock) | 5 | 25 | Unlimited |
| PDF export | ✓ | ✓ | ✓ | ✓ |
| Score-tracking history | — | ✓ | ✓ | ✓ |
| Scheduled weekly re-scans | — | 1 site | 5 sites | 50 sites |
| Competitor comparison | — | — | 3 competitors | 10 competitors |
| White-label branding | — | — | — | ✓ |
| Support tier | — | Email | Priority email | Priority + 24h SLA |
| Cost per audit | $19 | $5.80 | $3.16 | <$1.50 |

The cost-per-audit row tells the whole story. The $19 unlock is right for genuine one-off needs. Starter at $29 brings the per-audit cost to $5.80 when you use all five audits, roughly 70% below the unlock price, and it is already cheaper than unlocks from the second report in a month. Growth at $79 cuts that to $3.16 per audit (about an 83% discount) and adds the competitor module that most teams need by month two. Agency at $149 brings per-audit cost below $1.50 across a typical 100-audit month, plus unlocks the white-label features that make the audit a billable deliverable.
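The per-audit figures and break-even points can be reproduced with a short calculator. This is a sketch that compares list prices only. It ignores feature differences and the option of topping up a plan with extra unlocks, and the function names are hypothetical.

```python
UNLOCK_PRICE = 19
# (monthly price, audits included per month); None means unlimited.
PLANS = {"Starter": (29, 5), "Growth": (79, 25), "Agency": (149, None)}


def cost_per_audit(plan, audits_run):
    """Effective per-audit cost of a plan in a month where audits_run audits were used."""
    price, cap = PLANS[plan]
    if audits_run <= 0 or (cap is not None and audits_run > cap):
        raise ValueError(f"{plan} does not cover {audits_run} audits")
    return price / audits_run


def cheapest_option(audits):
    """Cheapest single option for a month with `audits` reports, by list price only."""
    options = {"$19 unlocks": UNLOCK_PRICE * audits}
    for plan, (price, cap) in PLANS.items():
        if cap is None or audits <= cap:
            options[plan] = price
    return min(options.items(), key=lambda item: item[1])


for n in (1, 2, 5, 6, 25, 30):
    name, cost = cheapest_option(n)
    print(f"{n:>2} audits/month: {name} (${cost})")
```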

Whichever path you choose, the engine you are using is identical. The same 12-dimension scoring, the same evidence-based findings, the same prioritized recommendations. The only difference is how often, against how many sites, and how branded the output is. Start with the free website grader to see what your score looks like today; then pick the unlock or subscription tier that fits the workflow you actually have.

Ready to See Your Twelve-Dimension Score?

Run any URL through the free grader. The summary score is free forever. The full report is $19, and the agency-grade subscription tools start at $29 per month.

Bibliography & References

- Aggarwal, P., Murahari, V., Rajpurohit, T., et al. (2024). "GEO: Generative Engine Optimization." Princeton University, Georgia Institute of Technology, Allen Institute for AI, IIT Delhi. arxiv.org/abs/2311.09735

- Google. (2024). "Core Web Vitals & Page Experience." Google Search Central Documentation. developers.google.com

- Schema.org. (2024). "Full Hierarchy: Schema.org Types." Schema.org. schema.org/docs/full.html

- W3C Web Accessibility Initiative. (2023). "Web Content Accessibility Guidelines (WCAG) 2.2." W3C Recommendation. w3.org/TR/WCAG22/

- Ahrefs. (2024). "Featured Snippets Study: How to Get More Clicks from Google." Ahrefs Blog. ahrefs.com

- Backlinko. (2024). "Google Ranking Factors: The Complete List." Backlinko. backlinko.com

- Search Engine Journal. (2024). "Rich Snippets and CTR: New Study Data." Search Engine Journal. searchenginejournal.com

- Google web.dev. (2024). "Web Vitals — Essential Metrics for a Healthy Site." web.dev. web.dev/articles/vitals

- Sistrix. (2024). "Sistrix Blog: SEO & Visibility Research." Sistrix Blog. sistrix.com

- BrightLocal. (2024). "Local Consumer Review Survey & Voice Search Statistics." BrightLocal. brightlocal.com

- Semrush. (2024). "The State of Technical SEO and Accessibility." Semrush Blog. semrush.com

- U.S. Department of Justice. (2024). "ADA Standards for Accessible Design and Web Accessibility Guidance." ADA.gov. ada.gov

- Google. (2024). "Think with Google: Mobile Page Speed New Industry Benchmarks." Think with Google. thinkwithgoogle.com

- Pew Research Center. (2024). "Search and Generative AI: How Americans Find Information Online." Pew Research. pewresearch.org

- Fishkin, R. (2024). "Zero-Click Searches: How Google Is Keeping Users on Its Own Pages." SparkToro Research. sparktoro.com

- World Health Organization. (2024). "Disability and Health Fact Sheet." WHO. who.int

- Google. (2024). "Search Quality Rater Guidelines." Google Static Documents. googleusercontent.com