Every time someone types a query into Google or Bing, a complex algorithm evaluates hundreds of ranking factors to determine which pages deserve the top spots. Understanding these signals — and knowing which ones boost your visibility versus which ones can tank your rankings — is the difference between page one dominance and digital obscurity. In this comprehensive guide, we have cataloged over 210 confirmed and suspected ranking factors used by the world’s two largest search engines, ranked by their positive or negative impact on your website’s search performance.

What follows is the most complete, research-backed analysis of search engine optimization ranking factors available in 2026. Whether you are a seasoned SEO professional, a business owner trying to improve organic traffic, or a digital marketer looking to understand what really moves the needle, this guide will give you the actionable intelligence you need.


Understanding Search Engine Ranking Factors in 2026

Search engine ranking factors are the criteria that Google, Bing, and other search engines use to evaluate and rank web pages in their search results. Google alone is believed to use over 200 distinct signals in its algorithm, though the exact number and their precise weights remain closely guarded secrets. What we do know comes from a combination of official Google documentation, patent filings, the landmark 2024 API leak, controlled experiments by SEO researchers, and sworn testimony from Google executives.

Not all ranking factors carry equal weight. According to research from First Page Sage, consistent publication of satisfying content accounts for roughly 23% of the algorithm’s weight, keyword usage in title tags for around 14%, and backlinks for approximately 13%. These figures are estimates based on extensive correlation studies and should be treated as directional rather than absolute. What matters most is understanding the relative importance of each factor category and how the categories interact with one another.

The landscape of search engine optimization has shifted dramatically in recent years. Google’s AI and machine-learning systems, such as RankBrain, BERT, and MUM, have fundamentally changed how the algorithm interprets queries and evaluates content. Meanwhile, the 2024 API documentation leak confirmed what many SEO professionals had long suspected: user behavior signals, including click data, dwell time, and pogo-sticking, play a far more significant role in rankings than Google had publicly acknowledged.

What Exactly Are Ranking Factors?

A ranking factor is any signal, characteristic, or metric that a search engine’s algorithm considers when determining the order of search results. These factors fall into several broad categories:

- Content factors evaluate the quality, relevance, depth, and freshness of your page content

- Technical factors assess your website’s infrastructure, speed, security, and crawlability

- Backlink factors measure the quantity, quality, and relevance of external links pointing to your site

- User experience factors track how real users interact with your pages in search results

- On-page factors look at how well individual pages are optimized for target keywords

- Domain factors consider the overall authority, age, and trustworthiness of your domain

- Local factors are specific to location-based searches and map results

- Social and brand factors gauge your brand’s online presence and reputation

Each factor can have a positive impact (boosting your rankings), a negative impact (hurting your rankings), or a context-dependent impact where the effect varies based on how the factor is implemented. For example, backlinks are powerfully positive when they come from authoritative, relevant websites, but they become negatively impactful when they originate from spammy link farms.

The 2024 Google API Leak: What We Now Know for Certain

In May 2024, a trove of internal Google Search API documentation was accidentally made public, describing more than 14,000 attributes (features) spread across thousands of modules. Google confirmed the documents were authentic, though it cautioned that the information might be “out of context.” For the SEO community, this leak was seismic: it confirmed several long-suspected ranking mechanisms that Google had publicly denied.

NavBoost: Google’s Click-Based Ranking System

Perhaps the most significant revelation was the confirmation of NavBoost, a system that processes 13 months of user click data to refine search rankings. Google VP Pandu Nayak confirmed NavBoost as “one of the important signals” during sworn testimony. The system classifies clicks into “good clicks,” “bad clicks,” and “last longest clicks” to assess user satisfaction. This means that when someone searches for a term, clicks your result, and stays on your page for an extended period, that is a powerful positive signal. Conversely, if users consistently click your result and immediately bounce back to Google — a behavior known as pogo-sticking — it sends a strong negative signal about your page’s relevance.

Google has access to far richer user signals than most people realize, including search queries, click behavior in search results, dwell time, pogo-sticking patterns, and overall engagement metrics on pages. The API leak confirmed that these signals are not merely observed but are actively used through systems like NavBoost to promote or demote pages in the rankings.

Site Authority Is Real

The leaked documentation referenced a “siteAuthority” metric, directly contradicting Google’s repeated public claims that it does not use domain authority as a ranking signal. The API also revealed “Host NSR” (Host-Level Site Rank), which computes site-level rankings based on different sections of a domain. This suggests that building overall domain authority, including through strategic link building, remains important.

Chrome Browser Data in Rankings

Another bombshell: the documents revealed that data from Google’s Chrome browser influences search rankings. This includes metrics like time on page, clicks, browsing patterns, and a metric called “topURL” — the most clicked page according to Chrome data. Google representatives had previously denied using Chrome data for ranking purposes.

The Sandbox Effect for New Sites

The leaked documentation confirmed the existence of a “sandbox effect” where new websites temporarily face suppressed rankings. Regulated by a “hostAge” attribute, this period allows Google to verify the reliability and trustworthiness of new domains before giving them full ranking potential. For new websites, this means patience and consistent content publication are essential during the early months.

Content Quality Ranking Factors: The Foundation of Rankings

Content quality is the single most influential category of ranking factors, collectively accounting for roughly 30–40% of the algorithm’s total weight. Google’s mission is to organize the world’s information and make it universally accessible and useful — and the quality of your content determines whether Google views your pages as worthy of that mission.

Content Relevance and Search Intent Alignment

Before anything else, your content must match the searcher’s intent. Google uses sophisticated AI systems including RankBrain, BERT, and MUM to understand what users actually want when they type a query. There are four primary types of search intent: informational (learning about a topic), navigational (finding a specific website), commercial (researching before purchasing), and transactional (ready to buy or take action).

If someone searches “search engine ranking factors,” they want a comprehensive educational resource, not a sales page for SEO services. Google’s algorithms are incredibly adept at distinguishing between these intent types and will demote pages that fail to align with what users actually want. This is why investing in high-quality content marketing that genuinely serves the reader is so critical.

Content Depth, Comprehensiveness, and Topical Authority

The API leak revealed a concept called SiteFocusScore, which evaluates how closely a website adheres to a specific topic, and SiteRadius, which measures how far individual pages deviate from that core topic. Websites that develop deep expertise in a focused niche (what SEO professionals call “topical authority”) receive significant ranking advantages. This is why a website dedicated to digital marketing that publishes dozens of in-depth articles about SEO, PPC advertising, and social media marketing tends to outrank a generalist website that covers everything from cooking to car repair.
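Google has not published how SiteFocusScore or SiteRadius are computed. They are commonly described as comparing page-level embeddings with a site-level embedding, and the sketch below illustrates only that general idea under loud assumptions: each page is already represented by a small, made-up vector, the “site embedding” is simply the average of those vectors, and a page’s hypothetical radius is its cosine distance from that average. The off-topic page stands out with the largest distance.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def site_centroid(vectors):
    """Element-wise average of all page vectors (a crude stand-in for a site embedding)."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]

def page_radius(pages):
    """Hypothetical radius: cosine distance (1 - similarity) of each page from the centroid."""
    centroid = site_centroid(list(pages.values()))
    return {name: 1 - cosine_similarity(vec, centroid) for name, vec in pages.items()}

# Toy 3-dimensional vectors: three marketing pages and one off-topic recipe.
pages = {
    "seo-guide": [0.9, 0.1, 0.0],
    "ppc-basics": [0.8, 0.2, 0.0],
    "link-building": [0.85, 0.15, 0.0],
    "banana-bread-recipe": [0.0, 0.1, 0.95],
}
for name, distance in sorted(page_radius(pages).items(), key=lambda kv: kv[1]):
    print(f"{name}: {distance:.3f}")
```

In a real analysis the vectors would come from a text-embedding model; the toy numbers here only demonstrate the arithmetic.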

Content that thoroughly covers a topic from every angle, answers related questions, and provides unique insights signals to Google that your page is the definitive resource for that query. The leaked OriginalContentScore metric also confirms that Google assigns higher value to original research, proprietary data, and first-hand experience.

E-E-A-T: Experience, Expertise, Authoritativeness, and Trustworthiness

Google’s E-E-A-T framework is not a single ranking factor but rather a set of principles that inform how Google’s quality raters and algorithms evaluate content quality. The leaked API documentation confirmed that Google stores author information as entities and assigns expertise scores based on credentials, publication history, and mentions across authoritative sources. Demonstrating E-E-A-T involves:

- Having recognized authors with verifiable credentials write your content

- Including author bylines, bios, and links to authoritative profiles

- Citing reputable sources and linking to original research

- Showing first-hand experience with the topics you cover

- Building a reputation through consistent, accurate, and helpful content

This matters especially for YMYL (Your Money or Your Life) topics like health, finance, and legal advice, where Google applies extra scrutiny to ensure that top-ranking content is trustworthy and accurate.

Content Freshness and Update Frequency

Google’s Query Deserves Freshness (QDF) system detects when a topic suddenly spikes in interest and temporarily boosts newer content for those queries. But freshness matters beyond trending topics, too. The API documentation revealed that content not regularly updated receives lower storage priority in Google’s indexing systems. Websites that update their content at least annually tend to maintain or improve their SERP positions, while stagnant content gradually loses ground.

The magnitude and frequency of updates also matter. A superficial change to a publication date without meaningful content improvements will not fool the algorithm. Google tracks the actual scope of content changes and rewards pages where updates add genuine value — new data, updated statistics, additional sections, or corrected information.

Content Structure, Readability, and Multimedia

Well-structured content with clear heading hierarchies (H1, H2, H3), bullet points, numbered lists, tables, and visual breaks receives a ranking advantage because it improves user engagement metrics. When users can quickly scan and find the information they need, they spend more time on the page, visit additional pages, and are less likely to pogo-stick back to the search results.

Multimedia content (images, videos, infographics, and interactive elements) also contributes positively. The leaked ChardScores metric suggests that Google estimates the “effort” behind content creation, with unique images, embedded videos, and interactive tools boosting page quality scores. Simply put, a wall of text rarely outperforms well-formatted content that uses visual aids to enhance understanding.

Technical SEO Ranking Factors: The Infrastructure Behind Rankings

Technical SEO factors form the foundation upon which all other ranking signals are built. You can have the best content in the world, but if Google cannot crawl, index, and render your pages efficiently, that content will never reach its ranking potential. Think of technical SEO as the infrastructure of your website — invisible to most visitors but absolutely essential for performance.

Core Web Vitals and Page Speed

Google’s three Core Web Vitals are confirmed ranking factors that measure real-world user experience: Largest Contentful Paint (LCP), Interaction to Next Paint (INP, which replaced First Input Delay in 2024), and Cumulative Layout Shift (CLS). LCP measures loading performance: your main content should load within 2.5 seconds. INP measures interactivity: pages should respond to user input within 200 milliseconds. CLS measures visual stability: page elements should not shift unexpectedly during loading, which Google quantifies as a CLS score of 0.1 or less. Each threshold is assessed at the 75th percentile of real-user page loads, so at least three out of four visits need to meet it.

Pages that pass all three Core Web Vitals thresholds receive a modest ranking boost, while pages with poor performance can be demoted. According to Google’s own data, users are 24% less likely to abandon a page that meets Core Web Vitals thresholds. For businesses investing in custom web development, optimizing these metrics should be a top priority.
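To check your own field data against these thresholds, the following sketch takes a list of real-user measurements per metric, finds the 75th percentile with the simple nearest-rank method, and labels it using Google’s published “good” and “poor” boundaries (LCP 2.5 s and 4 s, INP 200 ms and 500 ms, CLS 0.1 and 0.25). The sample values are invented, and Google’s own tooling (the Chrome UX Report) aggregates data differently, so treat this as an illustration rather than a replica.

```python
import math

# Google's published "good" / "poor" boundaries for each Core Web Vital.
# LCP is in seconds, INP in milliseconds, CLS is unitless.
THRESHOLDS = {
    "LCP": (2.5, 4.0),
    "INP": (200, 500),
    "CLS": (0.1, 0.25),
}

def p75(samples):
    """75th percentile using the nearest-rank method."""
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]

def rate(metric, samples):
    """Classify the 75th-percentile value as good / needs improvement / poor."""
    good, poor = THRESHOLDS[metric]
    value = p75(samples)
    if value <= good:
        return value, "good"
    if value <= poor:
        return value, "needs improvement"
    return value, "poor"

# Invented field measurements for one page.
field_data = {
    "LCP": [2.2, 2.8, 3.1, 3.5],     # seconds
    "INP": [120, 150, 180, 640],     # milliseconds; one slow outlier
    "CLS": [0.02, 0.05, 0.3, 0.4],
}
for metric, samples in field_data.items():
    value, label = rate(metric, samples)
    print(f"{metric}: p75={value} -> {label}")
```

Note how the single slow INP interaction does not fail the page: judging at the 75th percentile tolerates rare outliers but not a consistently poor experience.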

Mobile-First Indexing

Google now uses mobile-first indexing for essentially all websites, meaning the mobile version of your website is what Google primarily crawls and indexes. The switch began in 2018, and Google announced in 2023 that the transition was complete. If your site does not provide a good mobile experience, your rankings will suffer across all devices. This includes responsive design, readable text without zooming, adequate tap targets, and fast mobile loading speeds. Bing, notably, does not implement mobile-first indexing and maintains a single index for both mobile and desktop.

HTTPS and Website Security

HTTPS (SSL/TLS encryption) is a confirmed ranking signal for both Google and Bing. Google Chrome flags non-HTTPS sites as “Not Secure,” which can devastate click-through rates. Beyond the direct ranking boost, HTTPS protects user data and builds trust — both of which contribute to better engagement metrics and, consequently, better rankings.

Crawlability, Indexing, and Site Architecture

Search engines need to be able to discover and process your pages efficiently. Key technical factors include a properly configured robots.txt file, a comprehensive XML sitemap, clean URL structures, logical site architecture (flat rather than deep), canonical tags to prevent duplicate content issues, and proper handling of JavaScript rendering. The API leak confirmed that Google uses OnSiteProminence to evaluate a page’s significance within a website by simulating traffic flow from the homepage. Pages that are deeply buried with few internal links pointing to them will receive lower prominence scores.
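Python’s standard library can parse a robots.txt file and answer whether a given crawler may fetch a URL, which is a quick way to catch pages you block by accident. The robots.txt content and URLs below are made up. Note that `urllib.robotparser` follows the original robots.txt conventions and does not reproduce every detail of Google’s own matching rules (for example, wildcard patterns), so confirm important rules with Google’s own tools as well.

```python
from urllib.robotparser import RobotFileParser

# A made-up robots.txt; in practice you would fetch https://your-site/robots.txt.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /search

Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for url in [
    "https://example.com/blog/search-engine-ranking-factors-guide",
    "https://example.com/cart/checkout",
    "https://example.com/search?q=seo",
]:
    allowed = parser.can_fetch("Googlebot", url)
    print(f"{'ALLOWED' if allowed else 'BLOCKED'}  {url}")

# Sitemap lines declared in robots.txt help crawlers find your XML sitemap.
print("Sitemaps:", parser.site_maps())
```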

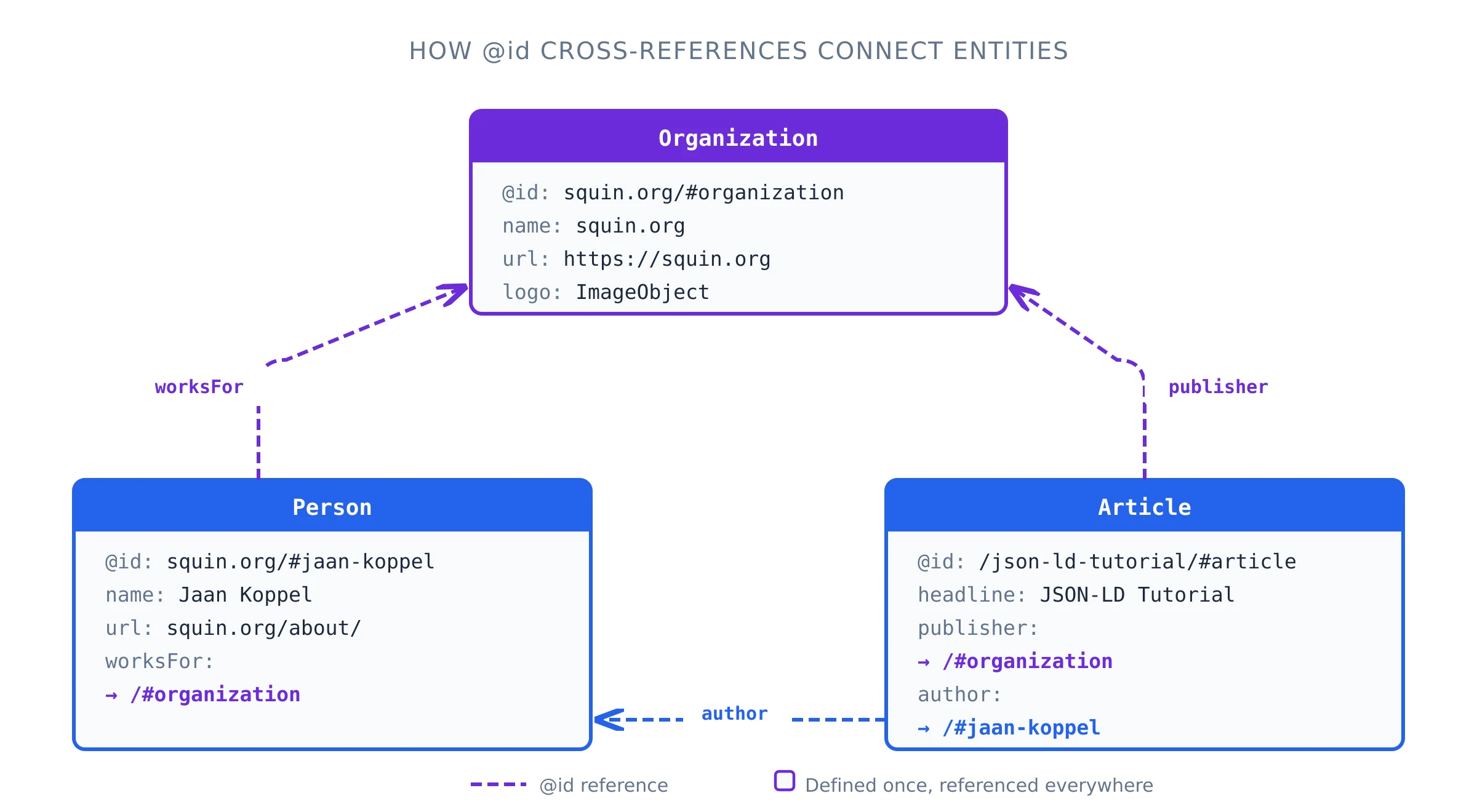

Structured Data and Schema Markup

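While Schema.org structured data is not a direct ranking factor in the traditional sense, it significantly impacts how your pages appear in search results. Rich results (review stars, product prices and availability, recipe details, and event information) can substantially increase click-through rates, which in turn sends positive user engagement signals through NavBoost. Note that in 2023 Google retired how-to rich results and restricted FAQ rich results to well-known government and health websites, so those formats no longer earn enhanced listings for most sites. Structured data also helps AI systems understand and cite your content, making it essential for generative engine optimization.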
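Structured data is most often added as a JSON-LD script in the page’s HTML. The sketch below builds a schema.org `Product` object with an `Offer` and an `AggregateRating`; the product, price, and rating values are placeholders, and the helper name `product_jsonld` is hypothetical. Google’s guidelines require that marked-up content such as ratings and prices match what visitors can actually see on the page.

```python
import json

def product_jsonld(name, description, price, currency, rating, review_count):
    """Build a schema.org Product object and wrap it in a JSON-LD script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": "https://schema.org/InStock",
        },
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        },
    }
    # Search engines read JSON-LD from a script tag with this MIME type.
    return f'<script type="application/ld+json">\n{json.dumps(data, indent=2)}\n</script>'

print(product_jsonld("Example SEO Audit", "A one-off technical SEO audit.", 499, "USD", 4.8, 37))
```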

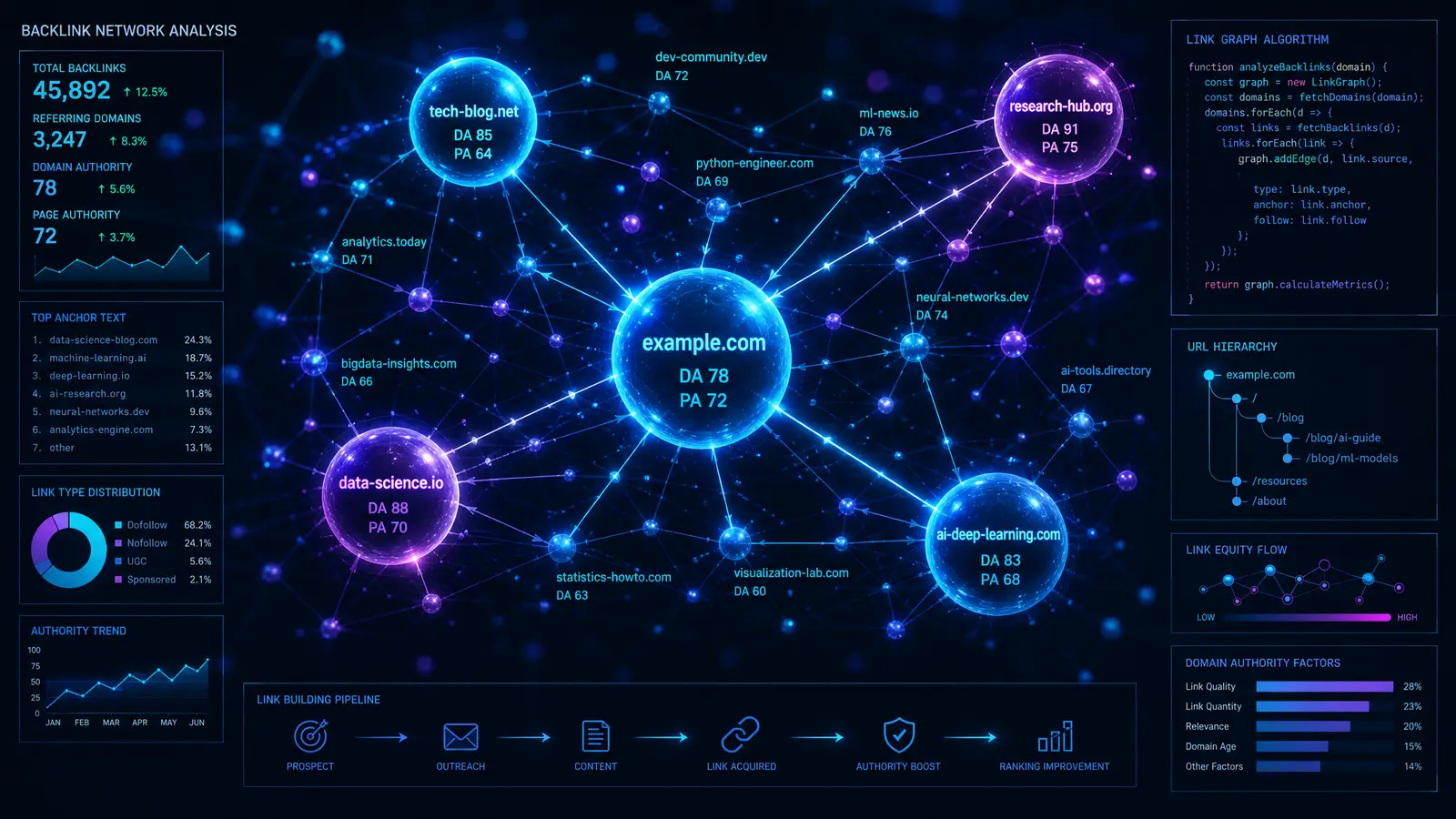

Backlink and Off-Page Ranking Factors

Backlinks remain one of the most powerful ranking signals, though their relative weight has decreased slightly in recent years as Google has developed more sophisticated ways to evaluate content quality directly. According to First Page Sage’s analysis, backlinks now account for approximately 13% of the ranking algorithm, down from 15% in 2024. However, a 13% share still makes them the third most important factor category overall.

Link Quality Over Quantity

The days of acquiring thousands of low-quality backlinks are long gone. Google’s Penguin algorithm specifically targets unnatural link profiles, and the API leak confirmed that Google categorizes links into different quality tiers based on click data. A single contextual backlink from a high-authority, relevant website carries more ranking weight than hundreds of links from directories, blog comments, or irrelevant sources. Our comprehensive guide on how to attract quality backlinks covers the strategies that actually work in 2026.

The most valuable backlinks share several characteristics: they come from websites with high domain authority, they are contextually relevant (the linking page covers a related topic), they are editorially placed (not paid or exchanged), and they use natural anchor text that includes a mix of branded, generic, and keyword-relevant phrases.

Number and Diversity of Referring Domains

Having many links from a single domain provides diminishing returns. Google values diversity in your backlink profile: links from many different domains, across different types of websites (blogs, news sites, educational institutions, government sites, industry directories), signal broad recognition and authority. Many SEO professionals also believe that links from .edu and .gov domains carry extra weight because those institutions undergo vetting processes, although Google has said that a link’s top-level domain does not make it more valuable on its own.
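Diversity is easier to judge once links are grouped by the site they come from. This sketch counts backlinks per referring host and reports how much of the profile the single largest domain accounts for. The input URLs are invented, and stripping a leading `www.` is a simplification: grouping by registrable domain properly requires the Public Suffix List.

```python
from collections import Counter
from urllib.parse import urlparse

def referring_domain_summary(backlink_urls):
    """Group backlinks by referring host and measure how concentrated the profile is."""
    hosts = Counter()
    for url in backlink_urls:
        host = urlparse(url).hostname or ""
        # Simplification: treat www.example.com and example.com as one domain.
        if host.startswith("www."):
            host = host[len("www."):]
        hosts[host] += 1
    if not hosts:
        return {"total_links": 0, "referring_domains": 0,
                "top_domain": None, "top_domain_share": 0.0}
    total = sum(hosts.values())
    top_domain, top_count = hosts.most_common(1)[0]
    return {
        "total_links": total,
        "referring_domains": len(hosts),
        "top_domain": top_domain,
        "top_domain_share": top_count / total,
    }

backlinks = [
    "https://www.blog-a.example/post-1",
    "https://blog-a.example/post-2",
    "https://blog-a.example/post-3",
    "https://news-b.example/story",
    "https://university-c.example/resources",
]
print(referring_domain_summary(backlinks))
```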

Anchor Text Relevance and Distribution

Anchor text — the clickable text of a link — provides Google with context about what the linked page is about. A natural anchor text distribution includes branded terms (your company name), generic phrases (“click here,” “learn more”), naked URLs, and keyword-relevant phrases. Over-optimizing anchor text with exact-match keywords is a well-known trigger for Google Penguin penalties, so maintaining a natural profile is essential.
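A quick way to see whether an anchor profile looks natural is to bucket each anchor into the categories above and look at the shares. In this sketch the brand name, target keyword, and bucketing rules are all assumptions made for illustration. It deliberately reports the mix without judging it, since no official target percentages exist.

```python
import re
from collections import Counter

def classify_anchor(anchor, brand, keyword):
    """Put one anchor into a bucket; the bucket names and rules are illustrative."""
    text = anchor.strip().lower()
    if re.match(r"(https?://|www\.)", text):
        return "naked URL"
    if brand.lower() in text:
        return "branded"
    if text == keyword.lower():
        return "exact match"
    if keyword.lower() in text:
        return "partial match"
    return "generic/other"

def anchor_distribution(anchors, brand, keyword):
    """Share of anchors in each bucket (values sum to 1)."""
    counts = Counter(classify_anchor(a, brand, keyword) for a in anchors)
    return {bucket: count / len(anchors) for bucket, count in counts.items()}

anchors = [
    "Acme Digital", "acme digital blog", "https://acme.example",
    "click here", "search engine ranking factors",
    "guide to search engine ranking factors", "learn more",
    "search engine ranking factors",
]
print(anchor_distribution(anchors, brand="Acme Digital", keyword="search engine ranking factors"))
```

A rising “exact match” share is the number to watch, given the over-optimization risk described above.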

Link Velocity and Link Age

Link velocity refers to the rate at which your website acquires new backlinks. A steady, natural increase in quality backlinks signals organic growth, while sudden spikes can trigger algorithmic scrutiny. The API documentation also confirmed that older backlinks carry more weight, suggesting that Google trusts links that have stood the test of time over newly acquired ones.
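One simple way to spot the kind of sudden spike described above is to compare each month’s new-link count with the average of the preceding months. The three-month window and 3× multiplier in this sketch are arbitrary illustration values, not known Google thresholds, and the monthly counts are invented.

```python
from statistics import mean

def flag_velocity_spikes(history, window=3, multiplier=3.0):
    """Return months whose new-link count exceeds `multiplier` times the trailing average.

    `window` and `multiplier` are arbitrary illustration values, not Google thresholds.
    """
    counts = [count for _, count in history]
    flagged = []
    for i in range(window, len(counts)):
        baseline = mean(counts[i - window:i])
        # A zero baseline (a brand-new site) is skipped rather than flagged.
        if baseline > 0 and counts[i] > multiplier * baseline:
            flagged.append(history[i][0])
    return flagged

history = [
    ("2025-01", 12), ("2025-02", 15), ("2025-03", 14), ("2025-04", 18),
    ("2025-05", 16), ("2025-06", 140), ("2025-07", 20),
]
print(flag_velocity_spikes(history))
```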

Internal Linking and On-Site Prominence

Internal links help distribute ranking power (often called “link equity” or “PageRank”) throughout your website. The API leak revealed an OnSiteProminence metric that simulates traffic flow from the homepage and other high-traffic pages. Pages that receive more internal links from important pages earn higher prominence scores. A well-planned internal linking strategy using topic clusters and pillar content architecture can significantly boost rankings for your most important pages.
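Google has not published how OnSiteProminence is calculated, but the classic PageRank algorithm from Brin and Page’s original paper gives a feel for how internal links concentrate authority. This sketch runs power-iteration PageRank with the conventional damping factor of 0.85 over a small, made-up internal link graph; a page that no internal link points to ends up with the lowest score.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Power-iteration PageRank over {page: [pages it links to]}."""
    pages = set(links) | {target for targets in links.values() for target in targets}
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page keeps a small baseline share (the "random jump" probability).
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # Split this page's rank equally among the pages it links to.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # Dangling page (no outgoing links): spread its rank across all pages.
                for target in pages:
                    new_rank[target] += damping * rank[page] / n
        rank = new_rank
    return rank

site = {
    "/": ["/services", "/blog", "/contact"],
    "/blog": ["/blog/ranking-factors", "/blog/link-building", "/"],
    "/blog/ranking-factors": ["/services", "/blog/link-building"],
    "/blog/link-building": ["/blog/ranking-factors"],
    "/services": ["/contact", "/"],
    "/contact": ["/"],
    "/old-landing-page": [],  # orphan: no internal link points here
}
for page, score in sorted(pagerank(site).items(), key=lambda kv: -kv[1]):
    print(f"{score:.3f}  {page}")
```

Adding a link to the orphan page from a well-linked page such as the homepage and rerunning the sketch shows its score rise, which is the intuition behind pillar-and-cluster linking.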

User Experience and Behavioral Signals: Google’s Secret Weapon

User experience signals are arguably the most underappreciated category of ranking factors. The 2024 API leak dramatically shifted our understanding of their importance, confirming that Google tracks and uses real user behavior data far more extensively than previously admitted. These signals collectively represent one of the most powerful forces shaping modern search rankings.

Click-Through Rate (CTR) from Search Results

Your organic click-through rate, the percentage of people who see your listing in search results and actually click on it, is a significant ranking signal processed by NavBoost. When your result consistently receives more clicks than competing results for the same query, Google interprets this as a strong relevance signal. Optimizing your title tags and meta descriptions with compelling, accurate copy that earns the click (not misleading clickbait) is one of the most effective ways to improve rankings without changing your actual content.
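If you export query data from a tool such as Google Search Console, you can look for listings whose CTR lags behind comparable results. This sketch groups queries by rounded average position and flags any whose CTR falls below the median of its group. The field names and numbers are made up, and comparing against your own median is an assumption chosen to avoid relying on published CTR-by-position benchmarks.

```python
from collections import defaultdict
from statistics import median

def low_ctr_queries(rows):
    """Flag queries whose CTR is below the median CTR of queries at a similar position."""
    by_position = defaultdict(list)
    for row in rows:
        ctr = row["clicks"] / row["impressions"]
        # Group by average position rounded to a whole number
        # (Python's round() sends exact .5 values to the nearest even number).
        by_position[round(row["position"])].append((row["query"], ctr))
    flagged = []
    for group in by_position.values():
        if len(group) < 2:
            continue  # nothing comparable to measure against
        typical = median(ctr for _, ctr in group)
        flagged.extend(query for query, ctr in group if ctr < typical)
    return sorted(flagged)

rows = [
    {"query": "seo ranking factors", "clicks": 120, "impressions": 1000, "position": 2.2},
    {"query": "google ranking signals", "clicks": 40, "impressions": 1000, "position": 1.9},
    {"query": "bing ranking factors", "clicks": 90, "impressions": 1000, "position": 2.1},
    {"query": "what is navboost", "clicks": 30, "impressions": 500, "position": 5.0},
]
print(low_ctr_queries(rows))
```

A flagged query is a candidate for a rewritten title or meta description, not proof of a problem.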

Dwell Time: How Long Users Stay

Dwell time is the duration a user spends on your page after clicking from search results before returning to the SERP. The API leak confirmed that NavBoost tracks the “last longest click” in a search session — the final result a user clicks on and dwells on for a significant period. This interaction is interpreted as the ultimate signal of a successfully completed search task. Creating content that is engaging, comprehensive, and genuinely useful naturally increases dwell time. Formatting techniques like table of contents navigation, expandable sections, interactive elements, and embedded media all contribute to longer engagement times.

Pogo-Sticking: The Silent Ranking Killer

Pogo-sticking occurs when a user clicks on a search result, quickly returns to the SERP because the result failed to meet their needs, and then clicks a different result. Google’s NavBoost system classifies these as “bad clicks” and tracks them as negative signals. While distinct from bounce rate (which measures single-page visits regardless of source), pogo-sticking specifically indicates that your page failed to satisfy the search query.

Common causes of pogo-sticking include misleading title tags that promise more than the content delivers, slow page loading that causes users to abandon the page, poor design that undermines credibility, content that fails to answer the query quickly enough, and intrusive pop-ups or ads that frustrate users. Reducing pogo-sticking requires aligning your title tags, meta descriptions, and content so that users find exactly what they expected when they clicked.
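NavBoost’s exact click classification is not public. The sketch below only illustrates the concepts of “bad clicks,” “good clicks,” and the “last longest click” described earlier: each click in a hypothetical search session is labeled by how long the user stayed, and the most recent click with a long stay is treated as the one that completed the search. The 30-second cutoff is an invented value, not a Google threshold.

```python
SHORT_DWELL_SECONDS = 30  # hypothetical cutoff; Google's real criteria are not public

def classify_session(clicks):
    """Label (url, dwell_seconds) clicks in session order and find the last long click."""
    labels = [
        (url, "bad" if dwell < SHORT_DWELL_SECONDS else "good")
        for url, dwell in clicks
    ]
    # Walk backwards: the most recent click with a long dwell ended the search.
    last_long_click = next(
        (url for url, dwell in reversed(clicks) if dwell >= SHORT_DWELL_SECONDS), None
    )
    return labels, last_long_click

session = [
    ("https://site-a.example/ranking-factors", 6),   # quick return to the SERP
    ("https://site-b.example/seo-guide", 45),
    ("https://site-c.example/ranking-factors-2026", 310),
]
labels, last_long_click = classify_session(session)
print(labels)
print("last long click:", last_long_click)
```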

Direct and Repeat Traffic

The API leak confirmed that Google monitors direct traffic (users who type your URL directly) and repeat visits as signals of site quality and brand strength. Websites that generate substantial direct traffic are perceived as established brands that users actively seek out, which contributes to higher “siteAuthority” scores. Building brand awareness through social media marketing, email campaigns, and offline marketing efforts indirectly supports your search rankings.

On-Page SEO Ranking Factors

On-page SEO refers to the optimization of individual web pages to rank higher and earn more relevant traffic. These factors are among the most directly controllable elements in your SEO strategy.

Title Tag Optimization

The title tag remains one of the most important on-page ranking factors. The API leak revealed a TitleMatchScore that measures how closely a page title matches a search query. While the relative weight of title tags has decreased slightly in recent years (now approximately 14% according to First Page Sage), they function as a prerequisite for ranking: pages without relevant keywords in the title tag rarely rank for competitive queries. Best practices include placing the primary keyword near the beginning, keeping titles under roughly 60 characters to avoid truncation (Google truncates by pixel width, so a character count is only an approximation), and making them compelling enough to drive clicks.
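These rules of thumb are easy to check automatically. This sketch flags titles longer than 60 characters and titles where the primary keyword is missing or appears only in the second half. The function name and the “second half” heuristic are assumptions for illustration, and a character count can only approximate pixel-based truncation.

```python
def audit_title(title, keyword, max_chars=60):
    """Return warnings for a title tag based on common rules of thumb."""
    warnings = []
    if len(title) > max_chars:
        warnings.append(f"{len(title)} characters; may be truncated (limit {max_chars})")
    position = title.lower().find(keyword.lower())
    if position == -1:
        warnings.append("primary keyword missing")
    elif position > len(title) // 2:
        # Hypothetical heuristic: "near the beginning" means within the first half.
        warnings.append("primary keyword appears late in the title")
    return warnings

print(audit_title("Search Engine Ranking Factors: 210+ Google & Bing Signals",
                  "search engine ranking factors"))
print(audit_title("Why marketers should study search engine ranking factors",
                  "ranking factors"))
```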

Heading Hierarchy (H1 through H6)

Proper heading hierarchy signals content structure and relevance to search engines. Your H1 tag should contain your primary keyword and clearly communicate the page’s main topic. H2 tags should mark major sections, and H3–H6 tags should denote subsections within those. This hierarchical structure helps search engines understand the topical organization of your content and improves accessibility for screen readers.
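A heading outline can be checked with Python’s built-in HTML parser. This sketch collects heading levels in document order, reports a missing H1, and flags jumps that skip a level (for example, an H2 followed directly by an H4), which can confuse screen-reader users who navigate by headings. The class and function names are hypothetical.

```python
from html.parser import HTMLParser

class HeadingCollector(HTMLParser):
    """Record the level (1-6) of every heading tag in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so "H2" arrives as "h2".
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))

def heading_issues(html):
    """List outline problems: a missing <h1> and any skipped heading levels."""
    collector = HeadingCollector()
    collector.feed(html)
    levels = collector.levels
    issues = []
    if 1 not in levels:
        issues.append("no <h1> found")
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            issues.append(f"skipped level: <h{previous}> followed by <h{current}>")
    return issues

page = "<h1>Ranking Factors</h1><h2>Content</h2><h4>Freshness</h4><h2>Links</h2>"
print(heading_issues(page))
```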

Strategic Keyword Placement

Beyond the title tag, keywords should appear naturally in several strategic locations: the first 100 words of the content, the H1 tag, at least one H2 subheading, the meta description, the URL slug, and image alt text. However, keyword stuffing (the excessive and unnatural insertion of keywords) is one of the most heavily penalized on-page factors. Modern SEO requires a natural writing style that incorporates related terms and semantic variations rather than repeating the same exact-match phrase. These are often called “LSI (Latent Semantic Indexing) keywords,” but the label is a misnomer: Google representatives have said there is no such thing as LSI keywords in Google’s systems.
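The placement checklist above can be turned into a rough audit. This sketch parses a page with Python’s built-in HTML parser and reports whether the keyword appears in each listed location. The sample page, URL, and helper names are hypothetical, matching is a simple case-insensitive substring test, and “first 100 words” here counts all visible body text, headings included.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse

class PlacementParser(HTMLParser):
    """Collect the text found in each location from the keyword checklist."""

    SKIP = {"title", "script", "style"}  # not visible body text

    def __init__(self):
        super().__init__()
        self.headings = {"h1": [], "h2": []}
        self.current_heading = None
        self.skip_depth = 0
        self.body_words = []
        self.meta_description = ""
        self.alt_texts = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag in self.SKIP:
            self.skip_depth += 1
        elif tag in self.headings:
            self.current_heading = tag
            self.headings[tag].append("")
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.meta_description = attrs.get("content") or ""
        elif tag == "img":
            self.alt_texts.append(attrs.get("alt") or "")

    def handle_endtag(self, tag):
        if tag in self.SKIP:
            self.skip_depth -= 1
        elif tag == self.current_heading:
            self.current_heading = None

    def handle_data(self, data):
        if self.skip_depth:
            return
        if self.current_heading:
            self.headings[self.current_heading][-1] += data
        self.body_words.extend(data.split())

def keyword_placement(html, page_url, keyword):
    """Report, location by location, whether `keyword` appears (case-insensitive)."""
    parser = PlacementParser()
    parser.feed(html)
    kw = keyword.lower()
    slug = urlparse(page_url).path.rstrip("/").rsplit("/", 1)[-1].lower()
    return {
        "first 100 words": kw in " ".join(parser.body_words[:100]).lower(),
        "h1": any(kw in text.lower() for text in parser.headings["h1"]),
        "h2": any(kw in text.lower() for text in parser.headings["h2"]),
        "meta description": kw in parser.meta_description.lower(),
        "url slug": kw.replace(" ", "-") in slug,
        "image alt text": any(kw in alt.lower() for alt in parser.alt_texts),
    }

page = """<html><head><title>Ranking Factors</title>
<meta name="description" content="A guide to search engine ranking factors for Google and Bing.">
</head><body>
<h1>Search Engine Ranking Factors in 2026</h1>
<p>This guide explains how search engines rank pages.</p>
<h2>Content quality</h2>
<img src="chart.webp" alt="Bar chart of ranking factor weights">
</body></html>"""
print(keyword_placement(page, "https://example.com/blog/search-engine-ranking-factors-guide",
                        "search engine ranking factors"))
```

A `False` is a prompt to consider the location, not an instruction to force the phrase in; the stuffing warning above still applies.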

Image Optimization

Every image on your page should include descriptive alt text that helps search engines understand the image content and improves accessibility. Images should be properly compressed (formats like WebP and AVIF are recommended) and appropriately sized to avoid unnecessary page weight. File names should be descriptive rather than generic (e.g., “search-engine-ranking-factors-guide.webp” rather than “IMG_2847.jpg”). Image optimization contributes both to page speed and to image search visibility.

Outbound Link Quality and Relevance

The quality and relevance of your outbound links — the links you include in your content pointing to external websites — serve as a signal of content quality. When you link to authoritative, relevant sources like academic papers, government databases, industry publications, and established news organizations, you signal to Google that your content is well-researched and positioned within a credible information ecosystem. The API leak revealed a factor that evaluates the quality of pages you link to, reinforcing the importance of choosing your outbound link targets carefully.

Conversely, linking to low-quality, spammy, or irrelevant websites can hurt your rankings. Excessive outbound links can dilute your page’s authority and confuse search engines about your content’s primary topic. A good rule of thumb is to include outbound links where they genuinely add value for the reader — supporting claims with evidence, providing additional reading for complex topics, or citing original research.

URL Structure and Optimization

Clean, descriptive URLs that include relevant keywords provide a small but meaningful ranking advantage. Google has confirmed that the words in a URL help it understand what the page is about, particularly when other signals are limited. Best practices include keeping URLs short (under 60 characters), using hyphens to separate words, including the primary keyword, avoiding unnecessary parameters and session IDs, and maintaining a logical hierarchy that reflects your site architecture.

Both Google and Bing factor URL structure into their ranking algorithms, though Bing places slightly more emphasis on exact-match keywords in URLs. For example, a URL like /blog/search-engine-ranking-factors-guide provides clearer topical signals than /blog/post-12847.
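Descriptive slugs like the one above are usually generated from the page title. This sketch lowercases the title, strips accents, replaces every run of other characters with a single hyphen, and trims at a word boundary to stay within a configurable length (60 characters by default, echoing the URL-length guideline above). `slugify` is a hypothetical helper written for this example, not a specific library’s function.

```python
import re
import unicodedata

def slugify(title, max_length=60):
    """Turn a page title into a short, hyphenated, lowercase URL slug."""
    # Decompose accented characters and drop the accents (é -> e).
    text = unicodedata.normalize("NFKD", title).encode("ascii", "ignore").decode("ascii")
    # Replace every run of non-alphanumeric characters with a single hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")
    if len(slug) > max_length:
        cut = slug[:max_length]
        if slug[max_length] != "-" and "-" in cut:
            # The limit falls mid-word: drop the partial word.
            cut = cut.rsplit("-", 1)[0]
        slug = cut.rstrip("-")
    return slug

print(slugify("Search Engine Ranking Factors: The Complete Guide (2026)"))
```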

Meta Description Optimization

While Google has stated that meta descriptions are not a direct ranking factor, this claim requires nuance. Meta descriptions significantly influence click-through rates, which NavBoost does track as a ranking signal. A compelling meta description that accurately summarizes the page content and includes relevant keywords can dramatically improve CTR. For Bing, meta descriptions carry even more weight — Bing has confirmed that meta descriptions are a ranking factor in their algorithm, making them doubly important for cross-engine optimization.

Best practices for meta descriptions include keeping them between 150 and 160 characters, incorporating the primary keyword naturally, including a clear value proposition or call to action, accurately reflecting the page content to reduce pogo-sticking, and using active voice and compelling language. Every page on your site should have a unique meta description that is specifically crafted for that page’s content and target audience.

Domain Authority and Trust Signals

Domain-level factors influence the ranking potential of every page on your website. While Google has historically denied using “domain authority” as a ranking factor, the 2024 API leak proved otherwise with the documented “siteAuthority” metric.

Understanding Domain Authority

Domain authority is a composite measure of your website’s overall trustworthiness and ranking power. It is influenced by the quality and quantity of your backlink profile, your content quality and topical relevance, your site’s age and history, user behavior signals, and brand recognition. Building domain authority is a long-term process that requires consistent investment in high-quality content, strategic link building, and positive user experiences. There are no shortcuts.

Domain Age and History

The API leak confirmed a “hostAge” attribute that influences rankings, with newer domains facing a temporary “sandbox” period. While domain age itself is not a powerful ranking factor, it correlates strongly with other trust signals: older domains tend to have more backlinks, more content, and more established brand recognition. What matters more is domain history — a domain with a clean record that has never been penalized or associated with spam will have an advantage over one with a troubled past.

Trust-Building Pages

Having essential trust pages — an About page, a Contact page with verifiable information, a Privacy Policy, and Terms of Service — signals to both search engines and users that your website is a legitimate operation. The API documentation suggests these pages contribute to overall trust scoring, particularly for YMYL topics.

Local SEO Ranking Factors

Local SEO ranking factors determine which businesses appear in Google’s Local Pack, Maps results, and local organic listings. For businesses serving specific geographic areas, these factors can be even more important than traditional organic ranking signals.

Google Business Profile Optimization

Your Google Business Profile (formerly Google My Business) is the single most important factor for local search rankings. A fully optimized profile includes accurate business information, comprehensive category selection, regular posts, photos, service descriptions, and active review management. Google uses the information in your Business Profile as a primary data source for local search results, making it essential that every field is completed accurately.

NAP Consistency and Local Citations

NAP stands for Name, Address, and Phone Number — the three critical pieces of information that must be consistent across every online mention of your business. Inconsistent NAP information confuses search engines and can significantly hurt your local rankings. Local citations (mentions of your business on directories, review sites, and social platforms) reinforce your business’s legitimacy and geographic relevance.
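NAP drift usually comes from formatting differences rather than real changes, so it helps to normalize records before comparing them. This sketch lowercases names and addresses, maps a few common street words to their abbreviations, strips phone numbers down to digits (dropping a leading US country code), and then compares each citation with the first record. The abbreviation map, the US-only phone handling, and the sample citations are all assumptions for illustration.

```python
import re

# Hypothetical abbreviation map; extend it for the address formats you actually see.
ABBREVIATIONS = {"street": "st", "avenue": "ave", "suite": "ste", "road": "rd"}

def normalize_nap(name, address, phone):
    """Reduce a Name/Address/Phone record to a comparable canonical form."""
    def words(text):
        tokens = re.findall(r"[a-z0-9]+", text.lower())
        return " ".join(ABBREVIATIONS.get(t, t) for t in tokens)

    digits = re.sub(r"\D", "", phone)
    # Assumes US numbers: drop a leading country code so +1 555... matches 555...
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]
    return (words(name), words(address), digits)

def nap_mismatches(citations):
    """Compare every citation with the first one (treated as the canonical record)."""
    canonical = normalize_nap(*citations[0][1])
    problems = []
    for source, record in citations[1:]:
        normalized = normalize_nap(*record)
        for field, a, b in zip(("name", "address", "phone"), canonical, normalized):
            if a != b:
                problems.append((source, field))
    return problems

citations = [
    ("website", ("Acme Digital LLC", "123 Main Street, Suite 4, Springfield", "(555) 010-0199")),
    ("Google Business Profile", ("Acme Digital LLC", "123 Main St., Ste 4, Springfield", "+1 555-010-0199")),
    ("old directory", ("Acme Digital", "45 Oak Avenue, Springfield", "555.010.0199")),
]
print(nap_mismatches(citations))
```

Here the Google Business Profile listing only differs in formatting and passes, while the old directory entry has a genuinely different name and address that need correcting.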

Reviews and Online Reputation

The quantity, quality, velocity, and diversity of your online reviews directly impact local search rankings. Google’s local algorithm weighs review signals heavily, including the number of reviews, the average rating, the recency of reviews, and the presence of relevant keywords in review text. Actively managing and responding to reviews demonstrates engagement and builds trust with both users and algorithms.

Proximity to Searcher

Geographic proximity is one of the strongest local ranking factors. When someone searches for a service “near me,” Google heavily weights the physical distance between the searcher and the business. While you cannot change your location, you can optimize for surrounding areas through location-specific landing pages, local content, and geographic keywords.

Local Content and Geo-Targeted Landing Pages

Creating location-specific content is one of the most effective local SEO strategies. This includes developing individual landing pages for each geographic area you serve, publishing blog posts about local events, news, and industry developments, creating case studies featuring local clients, and building local resource guides. Each location page should include the city or region name in the title, headings, meta description, and body content, along with locally relevant information that demonstrates genuine connection to the area.

For businesses operating across multiple locations, our service areas pages demonstrate how to create comprehensive, SEO-optimized location content that targets specific geographic markets while maintaining consistent brand messaging. Local content signals help search engines understand exactly where your business operates and which geographic queries your pages should rank for.

Local Link Building and Community Engagement

Local backlinks from community organizations, local business directories, chambers of commerce, local news outlets, and regional blogs carry significant weight in local search algorithms. These links signal geographic relevance and community integration that generic backlinks cannot replicate. Strategies for building local links include sponsoring local events, participating in community organizations, contributing expert commentary to local media, partnering with complementary local businesses, and getting listed in local business directories and industry associations.

Social Signals and Brand Factors

The relationship between social media and search rankings is one of the most debated topics in SEO, and Google and Bing take starkly different approaches.

Google’s Position on Social Signals

Google has consistently stated that social signals (likes, shares, comments) are not direct ranking factors. However, the relationship is more nuanced than a simple “no.” Social media activity drives traffic to your website, which generates the user behavior signals that NavBoost does track. Social sharing increases the likelihood of earning natural backlinks. Brand mentions on social platforms contribute to brand recognition and branded search volume, which the API leak confirmed are positive ranking signals. So while social media may not directly influence Google’s algorithm, it creates a cascade of secondary effects that absolutely do.

Bing’s Embrace of Social Signals

Bing takes a fundamentally different approach. The search engine has explicitly acknowledged that social signals are a ranking factor in its algorithm. Content that is frequently shared on Facebook, Twitter/X, LinkedIn, and Pinterest is more likely to rank higher on Bing. Bing can also source data from social media to populate its SERP knowledge graphs. For businesses that want to improve their Bing rankings specifically, investing in social media engagement provides a direct ranking benefit.

Brand Mentions and Branded Searches

Both Google and Bing treat brand signals as important ranking indicators. Unlinked brand mentions — references to your brand name without a hyperlink — are tracked and used as a trust signal. The volume of branded searches (people searching for your company name) indicates brand strength and user intent. Building a strong brand through consistent messaging, SEO-optimized press releases, media coverage, and community engagement creates a positive feedback loop that supports organic rankings.

Bing-Specific Ranking Factors: How Bing Differs from Google

While Google dominates global search market share, Bing powers a significant portion of searches — particularly through Microsoft Edge, Windows Search, and its integration with AI tools like Copilot. Bing’s algorithm differs from Google’s in several important ways that savvy marketers should understand.

Bing’s Emphasis on Exact-Match Keywords

While Google’s semantic understanding has evolved to interpret context and synonyms, Bing still places significantly more weight on exact-match keywords. This means that including your target keywords precisely in title tags, meta descriptions, H1 tags, and body content matters more for Bing rankings. Bing also treats meta descriptions as a ranking signal, unlike Google, which uses meta descriptions primarily for display purposes.

Social Signals as a Direct Ranking Factor

As discussed earlier, Bing openly incorporates social media engagement into its ranking algorithm. Businesses with active social media presences, high engagement rates, and content that is frequently shared on social platforms will see direct ranking benefits on Bing. This is one of the most significant differences between the two search engines.

Multimedia Content Optimization

Bing places greater emphasis on multimedia content — images, videos, and audio — than Google does. Bing’s crawlers are better at processing multimedia elements, and its search results often feature multimedia content more prominently. Optimizing your images, videos, and other media with proper metadata and descriptions provides a stronger ranking benefit on Bing.

Domain Age and Authority Preferences

Bing tends to favor older domains with established authority more strongly than Google. It also shows a more pronounced preference for .edu and .gov top-level domains. For newer websites, this means gaining traction on Bing may take longer, but it also means that established websites with clean domain histories can enjoy disproportionate ranking advantages.

IndexNow Protocol

Microsoft Bing introduced the IndexNow protocol (jointly with Yandex, in 2021), which lets websites notify participating search engines as soon as content is added, updated, or deleted. This typically leads to faster indexing than waiting for crawlers to discover changes on their own. Implementing IndexNow is a simple, low-cost way to help Bing keep the freshest version of your content indexed.
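To make the protocol concrete, here is a minimal sketch of an IndexNow bulk submission using only the Python standard library. It assumes the shared endpoint `https://api.indexnow.org/IndexNow` and the JSON fields `host`, `key`, `keyLocation`, and `urlList` from the public IndexNow documentation; the host, key, and URL below are placeholders, and the request is built but not sent.

```python
import json
import re
import urllib.request

# Shared IndexNow endpoint documented at indexnow.org; engines such as Bing
# also accept submissions at their own endpoints (e.g. https://www.bing.com/indexnow).
INDEXNOW_ENDPOINT = "https://api.indexnow.org/IndexNow"

def build_indexnow_payload(host, key, urls, key_location=None):
    """Build the JSON body for an IndexNow bulk URL submission."""
    # Keys are 8-128 characters drawn from letters, digits, and dashes.
    if not re.fullmatch(r"[A-Za-z0-9-]{8,128}", key):
        raise ValueError("invalid IndexNow key")
    payload = {"host": host, "key": key, "urlList": list(urls)}
    # By default engines look for the key file at https://<host>/<key>.txt;
    # keyLocation is only needed if you host it elsewhere on the same host.
    if key_location is not None:
        payload["keyLocation"] = key_location
    return payload

def build_indexnow_request(payload):
    """Wrap the payload in a POST request; nothing is sent until urlopen()."""
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )

# Example values are placeholders, not a real site or key.
payload = build_indexnow_payload(
    "www.example.com",
    "a1b2c3d4e5f6a7b8",
    ["https://www.example.com/new-page"],
)
req = build_indexnow_request(payload)
# urllib.request.urlopen(req)  # uncomment to submit; 200 or 202 indicates acceptance
```

In practice you would call this from your CMS publish hook so every new or updated URL is pinged once, rather than resubmitting the whole site.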

Negative Ranking Factors and Google Penalties

Understanding what hurts your rankings is just as important as knowing what helps. Negative ranking factors can result from intentional manipulation (black-hat SEO), unintentional mistakes (technical errors), or external attacks (negative SEO). Google penalties fall into two categories: algorithmic penalties applied automatically by systems like Panda and Penguin, and manual penalties issued by human reviewers.

Content-Related Penalties

The most common content-related penalties include thin content (pages with insufficient depth or value), duplicate content (substantial portions copied from other sources or repeated across your own site), keyword stuffing (unnatural overuse of target keywords), auto-generated or AI spam content (content produced solely for search engines without human oversight), and scraped content (content copied from other websites without adding value).

Google’s Helpful Content System, introduced in 2022 and folded into Google’s core ranking systems in March 2024, specifically targets websites that publish content primarily for search engine ranking rather than for genuine user benefit. Because these signals can be applied at the site level, even your high-quality pages can suffer if your site contains a significant amount of low-quality content.

Link-Related Penalties

Google’s Penguin algorithm targets unnatural link profiles, including purchased links, private blog network (PBN) links, link schemes, excessive reciprocal linking, and links from link farms. The API leak confirmed that Google categorizes links into quality tiers and can detect manipulative patterns. Recovery from link-related penalties typically involves auditing your backlink profile, disavowing toxic links through Google Search Console, and building new, high-quality links to replace the toxic ones.
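The disavow step can be scripted once your audit has produced a list of toxic domains and URLs. The sketch below writes text in the format Google’s disavow links tool accepts (one entry per line, `domain:` prefixes for whole domains, `#` for comments); the function name and the input lists are hypothetical, and Google advises disavowing only links you cannot get removed.

```python
def build_disavow_file(domains, urls, note=None):
    """Return text in Google's disavow-file format.

    One entry per line: 'domain:example.com' disavows every link from that
    domain, a full URL disavows a single page, and lines starting with '#'
    are comments that Google ignores.
    """
    lines = []
    if note:
        lines.append(f"# {note}")
    # Normalize domains before de-duplicating so "Spam.example" and
    # "spam.example" collapse into one entry.
    for domain in sorted({d.strip().lower() for d in domains}):
        lines.append(f"domain:{domain}")
    for url in sorted({u.strip() for u in urls}):
        lines.append(url)
    return "\n".join(lines) + "\n"

# Placeholder inputs, as if exported from a backlink audit.
text = build_disavow_file(
    domains=["Spam-Directory.example", "linkfarm.example", "linkfarm.example"],
    urls=["https://blog.example/paid-post"],
    note="Flagged in quarterly backlink audit",
)
print(text)
```

Disavowing at the domain level is usually safer than listing individual URLs, since spam domains tend to generate new linking pages faster than you can audit them.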

Technical and Security Penalties

Technical issues that can trigger penalties include cloaking (showing different content to users and search engines), sneaky redirects, hacked websites serving malware, misleading structured data (marking up content that does not match the page), and aggressive interstitials (pop-ups that block content on mobile devices). A hacked website is one of the most severe negative factors, as Google will actively warn users and suppress rankings until the security issue is resolved.

Manual Actions vs. Algorithmic Demotions

Manual actions are penalties applied by human Google reviewers and appear in Google Search Console under the “Manual Actions” section. Algorithmic demotions happen automatically when your site triggers negative signals in Google’s algorithms. The key difference is that manual actions require a formal reconsideration request after fixing the issues, while algorithmic recovery happens automatically (but often slowly) once the underlying problems are resolved.

Penalty Recovery Strategies and Timeline

Recovering from a Google penalty requires a systematic approach that addresses the root cause, documents the fixes, and demonstrates commitment to quality. For manual penalties, the recovery process involves identifying the specific violation listed in Google Search Console, implementing comprehensive fixes across the entire site, documenting every action taken in detail, and submitting a reconsideration request that clearly explains what went wrong, what was fixed, and what measures have been put in place to prevent recurrence. Reconsideration reviews can take anywhere from several days to a few weeks.

For algorithmic demotions, recovery happens automatically once Google re-evaluates your site during a subsequent algorithm update. This can take anywhere from weeks to months, and there is no reconsideration request process. The key is to make substantial, genuine improvements to your site’s quality rather than making minimal changes and hoping the algorithm will forgive you. Some algorithmic penalties, like those from the Helpful Content System, require significant improvements across the entire site before recovery is possible.

Prevention is always better than recovery. Invest in white-hat SEO practices from the start: create genuinely helpful content, build links through legitimate outreach and relationship building, maintain clean technical infrastructure, and regularly audit your site for potential issues. The short-term gains from manipulative tactics are never worth the long-term damage of a penalty.

Defending Against Negative SEO Attacks

Negative SEO — deliberate attempts by competitors or malicious actors to damage your search rankings — remains a concern despite Google’s assurances that their algorithms can identify and ignore most attacks. Common negative SEO tactics include building thousands of spammy backlinks to your site, scraping and republishing your content across the web, creating fake negative reviews, and hacking your website to inject malicious content or redirects.

To defend against negative SEO, establish a monitoring routine that includes regular backlink audits using tools like Ahrefs or SEMrush, Google Alerts for your brand name and key content, Google Search Console monitoring for unusual patterns, and security monitoring for your website. If you detect an attack, act quickly: disavow toxic backlinks, file DMCA takedown requests for scraped content, report fake reviews to the platform, and secure your website against further intrusion. A strong, well-established website with a diverse natural backlink profile is inherently more resistant to negative SEO attacks because any manipulative additions are statistically insignificant compared to the legitimate profile.
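One way to make that monitoring routine less manual is to flag sudden bursts of new referring domains, which is the typical footprint of a spam-link attack. The sketch below assumes you can export a daily count of newly discovered referring domains from your backlink tool; the function name, the 28-day window, and the three-standard-deviation threshold are all illustrative assumptions, not values any tool or search engine prescribes.

```python
from statistics import mean, pstdev

def flag_link_spikes(daily_new_domains, window=28, threshold=3.0):
    """Return indexes of days whose new-referring-domain count is unusually high.

    A day is flagged when its count exceeds the trailing-window mean by more
    than `threshold` standard deviations. Both defaults are illustrative.
    """
    flagged = []
    for i in range(window, len(daily_new_domains)):
        history = daily_new_domains[i - window:i]
        mu = mean(history)
        # Floor sigma at 1.0 so a perfectly flat history does not flag
        # every tiny uptick as an attack.
        sigma = max(pstdev(history), 1.0)
        if daily_new_domains[i] > mu + threshold * sigma:
            flagged.append(i)
    return flagged

# Hypothetical data: a steady baseline followed by a one-day burst.
counts = [3, 4, 2, 5, 3, 4, 3, 2, 4, 3] * 3 + [250, 4]
print(flag_link_spikes(counts))  # → [30]
```

A flagged day is a prompt to inspect the new links manually, not proof of an attack; a viral article can produce the same spike with entirely legitimate links.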

Ranking Factors for AI Search: GEO and AIO

The rise of AI-powered search experiences — Google’s AI Overviews, Bing’s Copilot, Perplexity, ChatGPT, and other large language models — has introduced an entirely new dimension to search optimization. Generative Engine Optimization (GEO) and AI Optimization (AIO) focus on ensuring your content is discovered, cited, and recommended by AI systems.

GEO: Optimizing for AI-Generated Answers

When AI systems generate responses to user queries, they draw on web content, either absorbed during model training or retrieved at query time. The factors that influence whether your content is cited include content authority and trustworthiness (E-E-A-T signals), content structure (clear headings, concise paragraphs, direct answers), factual accuracy and source attribution, schema markup that helps AI systems understand your content, and brand authority and recognition.

Some industry studies report that websites with comprehensive Schema.org structured data are up to three times more likely to be cited in AI-generated responses; treat that figure as directional rather than definitive. The likely mechanism is that structured data provides machine-readable context that helps AI systems accurately interpret and reference your content.

AIO: Optimizing Content for AI Understanding

AI Optimization goes beyond traditional SEO to ensure your content is optimally structured for AI comprehension. Key AIO factors include natural language clarity (writing in clear, unambiguous sentences that AI systems can easily parse), comprehensive topic coverage (addressing questions from multiple angles), question-and-answer format (directly answering common questions), data presentation (using tables, statistics, and structured formats that AI can extract), and entity optimization (clearly identifying and contextualizing people, places, organizations, and concepts).

E-E-A-T and Trust Factor Deep Dive

Google’s E-E-A-T framework — Experience, Expertise, Authoritativeness, and Trustworthiness — has become one of the most significant quality evaluation systems in modern search. While E-E-A-T itself is not a single algorithmic factor, the 2024 API leak confirmed that Google systematically measures these signals through multiple mechanisms.

Experience: Demonstrating First-Hand Knowledge

The “Experience” component, added by Google in late 2022, emphasizes content created by people who have genuine first-hand experience with the subject matter. Google’s algorithms look for signals of personal experience including first-person accounts, original photography, proprietary data, case studies based on real work, and demonstrations of practical knowledge that could only come from direct involvement.

Expertise: Proving You Know Your Subject

The API leak confirmed that Google stores author information as entities and assigns expertise scores. Building expertise signals involves establishing authors with verifiable credentials, consistently publishing in-depth content on your niche topics, earning recognition through citations, speaking engagements, and industry publications, and maintaining detailed author pages with links to authoritative profiles.

Authoritativeness: Being the Go-To Source

Authoritativeness is built through external validation: backlinks from authoritative sources, brand mentions, media coverage, citations in academic or industry publications, and inclusion in trusted directories. The API’s “siteAuthority” metric combines these signals into a comprehensive authority score that influences rankings across all of a domain’s pages.

Trustworthiness: The Foundation of Everything

Trustworthiness is the most critical E-E-A-T component, serving as the foundation upon which the other three pillars stand. Trust signals include HTTPS implementation, transparent business information, clear editorial policies, accurate content with proper source attribution, positive user reviews, and compliance with privacy regulations. For YMYL topics, Google applies heightened trust requirements.

Schema.org Markup as a Ranking Factor

While schema markup is not a direct ranking factor in the traditional algorithm, its indirect effects on rankings are substantial and well-documented. Schema markup influences rankings through several mechanisms.

Rich Results and CTR Improvement

Pages with rich results (star ratings, event details, product pricing, and similar enhancements) can achieve noticeably higher click-through rates than standard blue links. Industry studies commonly report CTR improvements in the 20–40% range, though results vary by query and vertical. Note that since 2023 Google shows FAQ rich results only for a small set of authoritative government and health websites and no longer shows how-to rich results. Because NavBoost tracks click behavior, higher CTR gives schema implementation an indirect pathway to improved rankings.

AI System Understanding

Schema markup provides machine-readable context that helps both traditional search algorithms and AI systems understand your content. For generative AI search results, structured data is critical for being cited and referenced accurately. Implementing comprehensive schema types, including BlogPosting, FAQPage, HowTo, Product, LocalBusiness, and Organization schemas, helps AI systems accurately interpret and present your information.
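As an illustration, the sketch below generates BlogPosting markup as JSON-LD, the format Google recommends for structured data, with a nested Person author so the article is tied to an identifiable author entity. The property names come from schema.org; the helper names and all example values are hypothetical.

```python
import json

def blog_posting_jsonld(headline, url, author_name, author_url,
                        published, modified=None):
    """Build a schema.org BlogPosting object with a nested Person author."""
    data = {
        "@context": "https://schema.org",
        "@type": "BlogPosting",
        "headline": headline,
        "mainEntityOfPage": url,
        "datePublished": published,  # ISO 8601, e.g. "2026-01-15"
        "author": {"@type": "Person", "name": author_name, "url": author_url},
    }
    if modified:
        data["dateModified"] = modified
    return data

def to_script_tag(data):
    """Serialize JSON-LD for embedding in the page's HTML."""
    # Escaping "<" keeps a stray "</script>" inside a string value from
    # closing the tag early; the escaped form is still valid JSON.
    body = json.dumps(data, indent=2).replace("<", "\\u003c")
    return f'<script type="application/ld+json">\n{body}\n</script>'

# Placeholder values for illustration.
post = blog_posting_jsonld(
    headline="Search Engine Ranking Factors",
    url="https://www.example.com/blog/ranking-factors",
    author_name="Jane Doe",
    author_url="https://www.example.com/authors/jane-doe",
    published="2026-01-15",
)
print(to_script_tag(post))
```

Generating markup from the same data that renders the visible page (title, author, dates) keeps the two in sync, which matters because markup that does not match page content can be treated as misleading structured data.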

Knowledge Graph Integration

Proper entity markup (Organization, Person, Place) helps your brand or content become part of Google’s Knowledge Graph, which powers knowledge panels and entity-based search features. Knowledge Graph integration provides significant visibility advantages and reinforces your brand’s authority and trustworthiness.
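A common starting point for entity markup is an Organization object on the home page, with `sameAs` links to the brand’s official profiles so search engines can connect them to one entity. This is a minimal sketch: the `#organization` suffix on `@id` is a widespread convention rather than a requirement, the profile URLs are placeholders, and valid markup does not guarantee a knowledge panel.

```python
import json

def organization_jsonld(name, url, logo_url, profile_urls):
    """Build a schema.org Organization entity for a brand's home page."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        # A stable @id lets other markup on the site reference this entity.
        "@id": url.rstrip("/") + "/#organization",
        "name": name,
        "url": url,
        "logo": logo_url,
        # sameAs points to official profiles describing the same organization.
        "sameAs": list(profile_urls),
    }

org = organization_jsonld(
    name="Example Co.",
    url="https://www.example.com/",
    logo_url="https://www.example.com/logo.png",
    profile_urls=[
        "https://www.linkedin.com/company/example-co",
        "https://x.com/exampleco",
    ],
)
print(json.dumps(org, indent=2))
```

Other markup, such as a BlogPosting’s `publisher`, can then reference the same entity with `{"@id": "https://www.example.com/#organization"}` instead of repeating its details.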

Voice Search and Conversational Query Optimization

Voice search has become an increasingly important consideration for SEO as smart speakers, voice assistants, and mobile voice search continue to grow. Voice queries tend to be longer, more conversational, and more question-oriented than typed queries. To optimize for voice search, structure your content to directly answer common questions in your niche, use natural conversational language, target long-tail keyword phrases that mirror how people actually speak, and implement FAQ schema markup that provides concise answers to specific questions.
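FAQ markup follows the schema.org FAQPage pattern: a list of Question entities, each with an acceptedAnswer. The sketch below builds it from question and answer pairs; the helper name and example text are illustrative, and the answers should match the text visible on the page. Google now limits FAQ rich results to a narrow set of sites, so the main value elsewhere is machine-readable clarity.

```python
import json

def faq_page_jsonld(pairs):
    """Build a schema.org FAQPage from (question, answer) pairs.

    The questions and answers should match text visible on the page.
    """
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }

faq = faq_page_jsonld([
    ("Does Google use click data as a ranking factor?",
     "Yes. Google uses click data through its NavBoost system."),
])
print(json.dumps(faq, indent=2))
```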

Google’s featured snippets are a primary source for voice search answers. When Google Assistant reads a search result aloud, it typically draws from the featured snippet or position zero result; other assistants, such as Siri and Alexa, rely on their own data sources and search partners. Structuring your content with clear question-and-answer formats, using proper heading tags for questions, and providing concise, authoritative answers in the first paragraph below each heading maximizes your chances of capturing these voice search placements. This optimization also benefits GEO (Generative Engine Optimization) since AI systems use similar content extraction patterns when generating responses.

International and Multilingual SEO Considerations

For businesses targeting multiple countries or languages, international SEO factors add another layer of complexity. Hreflang tags tell search engines which language and geographic versions of a page exist, preventing duplicate content issues and ensuring users see the correct version. Country-code top-level domains (ccTLDs like .co.uk or .com.au) provide the strongest geographic signal but require separate domain management. Subdirectories (/en/, /fr/) and subdomains (en.example.com) offer alternative approaches with different trade-offs between signal strength and management complexity.
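Hreflang annotations only work when they are reciprocal: every language or regional version must list all the others, including itself. Generating the full set from one mapping is the simplest way to keep them consistent. This sketch assumes HTML `<link>` tags (sitemaps and HTTP headers are alternative delivery methods); the function name and URLs are placeholders.

```python
from html import escape

def hreflang_links(alternates, x_default=None):
    """Return <link rel="alternate"> tags for every language/region version.

    `alternates` maps hreflang codes (ISO 639-1 language, optionally followed
    by an ISO 3166-1 region such as "en-gb") to absolute URLs. The same full
    set of tags should appear on every version so annotations are reciprocal.
    """
    tags = [
        f'<link rel="alternate" hreflang="{code}" href="{escape(url)}" />'
        for code, url in sorted(alternates.items())
    ]
    if x_default:
        # x-default names the fallback page for users matching no listed locale.
        tags.append(
            f'<link rel="alternate" hreflang="x-default" href="{escape(x_default)}" />'
        )
    return "\n".join(tags)

tags = hreflang_links(
    {
        "en-gb": "https://www.example.co.uk/",
        "en-au": "https://www.example.com.au/",
        "fr": "https://www.example.com/fr/",
    },
    x_default="https://www.example.com/",
)
print(tags)
```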

Server location also influences international rankings. While CDNs have reduced the importance of physical server proximity, hosting your site on servers located in your target market can still provide a marginal advantage for local search results. Google retired Search Console’s International Targeting report in 2022, so for Google, geographic signals now come mainly from ccTLDs, hreflang annotations, and localized content. Bing Webmaster Tools offers a geo-targeting feature that lets you specify which countries specific sections of your site are intended for, providing an additional geographic signal for Bing.

How to Prioritize Ranking Factors for Your Website

With over 200 ranking factors to consider, prioritization is essential. Not every factor matters equally for every website, and the most effective SEO strategy focuses resources on the factors that will move the needle most for your specific situation.

Start with a Comprehensive Audit

Before optimizing anything, conduct a thorough audit of your current SEO performance. Use Google Search Console to identify technical issues, analytics tools to understand user behavior, and backlink analysis tools to evaluate your link profile. This audit will reveal your biggest opportunities and most urgent problems.

Build the Foundation First

Technical SEO is the foundation: ensure your site is fast, mobile-friendly, secure, and crawlable before investing heavily in content or link building. Fix any critical issues like broken links, missing canonical tags, slow loading times, and crawl errors. These foundational elements create the infrastructure necessary for all other ranking factors to function.
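Several of these foundational checks can be automated per page. The sketch below uses Python’s built-in `html.parser` to report a missing title, canonical link, or meta description, and an accidental `noindex`. It is deliberately narrow: the class and function names are hypothetical, it does not fetch pages, follow redirects, or read `X-Robots-Tag` headers, and it is no substitute for a full crawler or Search Console.

```python
from html.parser import HTMLParser

class OnPageAudit(HTMLParser):
    """Collect a few crawl-critical tags from one HTML document."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.canonical = None
        self.meta_description = None
        self.noindex = False
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        # Attribute names arrive lowercased; valueless attributes give None.
        a = {k: (v or "") for k, v in attrs}
        if tag == "title":
            self._in_title = True
        elif tag == "link" and "canonical" in a.get("rel", "").lower().split():
            self.canonical = a.get("href")
        elif tag == "meta":
            name = a.get("name", "").lower()
            if name == "description":
                self.meta_description = a.get("content", "")
            elif name == "robots" and "noindex" in a.get("content", "").lower():
                self.noindex = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data

def audit_html(html):
    """Return a list of human-readable issues found in the page."""
    parser = OnPageAudit()
    parser.feed(html)
    issues = []
    if not parser.title.strip():
        issues.append("missing <title>")
    if parser.canonical is None:
        issues.append("missing canonical link")
    if not parser.meta_description:
        issues.append("missing meta description")
    if parser.noindex:
        issues.append("page is set to noindex")
    return issues

page = ('<html><head><title>Ranking Factors Guide</title>'
        '<meta name="robots" content="noindex, follow">'
        '</head><body><h1>Guide</h1></body></html>')
print(audit_html(page))
```

Running this over a list of URLs exported from your sitemap gives a quick baseline before deeper work on speed, mobile usability, and crawl errors.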

Develop a Content-First Strategy

With the foundation in place, focus on creating content that genuinely serves your audience. Develop topic clusters around your core expertise areas, publish comprehensive guides that demonstrate E-E-A-T, and update existing content regularly to maintain freshness. Content quality is the highest-weighted factor category, and it also generates the user engagement signals and natural backlinks that feed other ranking factors.

Build Authority Systematically

Authority building is a long-term process that combines link acquisition, brand building, and reputation management. Focus on earning links from relevant, authoritative sources through original research, data-driven content, expert commentary, and strategic partnerships. Invest in brand visibility through social media, industry events, and media relations. Every piece of the authority-building puzzle reinforces every other piece.

Need help optimizing your website for these ranking factors?

Our team of SEO specialists can audit your site and build a strategy targeting the factors that matter most for your business.

Frequently Asked Questions About Search Engine Ranking Factors

How many ranking factors does Google actually use?

Google is believed to use over 200 distinct ranking factors, though the exact number has never been officially confirmed. The 2024 API documentation leak described more than 14,000 attributes across its modules, suggesting the actual system is far more complex than the commonly cited “200 factors,” although not every documented attribute is necessarily used for ranking. Many of these features are sub-components or variations of broader factors. Google VP Pandu Nayak has described the ranking system as a collection of interconnected systems rather than a single monolithic algorithm, with major components including NavBoost (user behavior), PageRank (links), RankBrain (machine learning), BERT (language understanding), and MUM (multimodal understanding).

Does Google use click data as a ranking factor?

Yes. The 2024 Google API leak and sworn testimony from Google VP Pandu Nayak confirmed that Google uses click data through its NavBoost system. According to that testimony, NavBoost draws on 13 months of user click data, and the leaked documentation classifies interactions into “good clicks,” “bad clicks,” and “last longest clicks.” High click-through rates and long dwell times are widely interpreted as positive signals, while pogo-sticking (quickly returning to search results) is considered a negative signal. The leaked documentation also references Chrome-derived metrics, suggesting that Chrome browsing data informs at least some ranking signals.

How do Bing’s ranking factors differ from Google’s?

Bing differs from Google in several key ways: (1) Bing openly uses social signals (likes, shares, engagement) as a direct ranking factor, while Google denies this; (2) Bing places more weight on exact-match keywords in metadata and content; (3) Bing considers meta descriptions a ranking factor, while Google uses them primarily for display; (4) Bing does not use mobile-first indexing, maintaining a single index for all devices; (5) Bing more strongly favors older domains and .edu/.gov TLDs; (6) Bing places greater emphasis on multimedia content; and (7) Bing, together with Yandex, introduced the IndexNow protocol for faster content indexing.

What is the single most important ranking factor in 2026?

Content quality and relevance to search intent is widely considered the single most important ranking factor in 2026. According to First Page Sage’s research, consistent publication of satisfying content accounts for approximately 23% of Google’s algorithm weight — more than any other individual factor. However, it is important to understand that ranking factors work synergistically. Excellent content alone will not rank if your site has severe technical issues or zero backlinks. The most effective SEO strategies address content quality, technical health, backlink authority, and user experience simultaneously.

Can negative SEO from competitors actually hurt my rankings?

While Google has stated that its algorithms are designed to ignore most negative SEO attacks, the reality is more nuanced. Pointing thousands of spammy backlinks at a competitor’s site can potentially trigger algorithmic scrutiny, particularly for smaller websites with weaker existing link profiles. Google’s Disavow tool gives site owners a way to ask Google to ignore links they do not want counted. The best defense against negative SEO is building a strong, diverse, and natural backlink profile that makes any spammy additions statistically insignificant, combined with regular backlink monitoring and prompt disavowing of suspicious links.

Bibliography and Sources

The following sources were consulted in the research and writing of this guide. All claims about ranking factors are attributed to the most authoritative available sources, including official Google and Microsoft documentation, leaked API documentation, sworn testimony, and independent SEO industry research.

Dean, Brian. “Google’s 200 Ranking Factors: The Complete List (2026).” Backlinko, 2026, backlinko.com/google-ranking-factors.

First Page Sage. “The Google Algorithm Ranking Factors.” First Page Sage, 2025, firstpagesage.com/seo-blog/the-google-algorithm-ranking-factors/.

Goodwin, Danny. “Google API Leak: What the Documents Reveal About Search Ranking.” Search Engine Journal, May 2024, searchenginejournal.com.

Google. “How Google Search Works.” Google Search Central, 2026, developers.google.com/search/docs/fundamentals/how-search-works.

Google. “Search Quality Evaluator Guidelines.” Google Search Central, 2025, developers.google.com/search/docs/fundamentals/creating-helpful-content.

Hobo Web. “Navboost: How Google Uses Large-Scale User Interaction Data to Rank Websites.” Hobo Web, 2024, hobo-web.co.uk/navboost-how-google-uses-large-scale-user-interaction-data-to-rank-websites/.

Ignite Visibility. “How Is Bing SEO Different than Google SEO?” Ignite Visibility, 2025, ignitevisibility.com/how-is-bing-seo-different-than-google-seo/.

King, Rand. “Google’s API Leak: A Comprehensive Analysis of Confirmed Ranking Factors.” GrowFusely, 2024, growfusely.com/blog/google-api-leak/.

Microsoft. “How Bing Delivers Search Results.” Microsoft Support, 2025, support.microsoft.com/en-us/topic/how-bing-delivers-search-results.

Radd Interactive. “Bing’s Ranking Factors & Algorithm: How to Rank on Bing.” Radd Interactive, 2024, raddinteractive.com/bings-ranking-factors-algorithm-how-to-rank-on-bing/.

Schwartz, Barry. “Everything We Know About the Google Algorithm Leak.” Search Engine Roundtable, May 2024, seroundtable.com.

Semetrical. “Bing vs Google: Ranking Factor Comparison.” Semetrical, 2025, semetrical.com/bing-vs-google-ranking-factor-comparison/.

SEO.com. “Google Penalties: A Complete Guide.” SEO.com, 2025, seo.com/basics/how-search-engines-work/google-penalties/.

Sullivan, Danny. “How Google’s Helpful Content System Works.” Google Blog, 2024, blog.google.

go-seo.com. “200 Google Ranking Factors Explained Simply: The Complete Guide Heading into 2025.” Go-SEO, 2024, go-seo.com/200-google-ranking-factors-explained-simply-the-complete-guide-heading-into-2025/.

Ready to Dominate Search Rankings?

Our team at Digital Marketing Co. specializes in optimizing every ranking factor that matters. From technical SEO audits to content strategy and link building, we build comprehensive campaigns that deliver measurable results.
